Spaces:

Runtime error

Runtime error

Upload folder using huggingface_hub

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- .gitattributes +14 -0

- .gitignore +15 -0

- .gitmodules +10 -0

- ASR/FunASR.py +54 -0

- ASR/README.md +77 -0

- ASR/Whisper.py +129 -0

- ASR/__init__.py +4 -0

- ASR/requirements_funasr.txt +3 -0

- AutoDL部署.md +215 -0

- ChatTTS/.gitignore +163 -0

- ChatTTS/ChatTTS/__init__.py +1 -0

- ChatTTS/ChatTTS/core.py +200 -0

- ChatTTS/ChatTTS/experimental/llm.py +40 -0

- ChatTTS/ChatTTS/infer/api.py +125 -0

- ChatTTS/ChatTTS/model/dvae.py +155 -0

- ChatTTS/ChatTTS/model/gpt.py +265 -0

- ChatTTS/ChatTTS/utils/gpu_utils.py +23 -0

- ChatTTS/ChatTTS/utils/infer_utils.py +141 -0

- ChatTTS/ChatTTS/utils/io_utils.py +14 -0

- ChatTTS/LICENSE +407 -0

- ChatTTS/README.md +132 -0

- ChatTTS/README_CN.md +136 -0

- ChatTTS/example.ipynb +0 -0

- ChatTTS/requirements.txt +8 -0

- ChatTTS/webui.py +113 -0

- CosyVoice/.github/ISSUE_TEMPLATE/bug_report.md +38 -0

- CosyVoice/.github/ISSUE_TEMPLATE/feature_request.md +20 -0

- CosyVoice/.gitignore +49 -0

- CosyVoice/.gitmodules +3 -0

- CosyVoice/CODE_OF_CONDUCT.md +76 -0

- CosyVoice/FAQ.md +16 -0

- CosyVoice/LICENSE +201 -0

- CosyVoice/README.md +189 -0

- CosyVoice/asset/dingding.png +0 -0

- CosyVoice/cosyvoice/__init__.py +0 -0

- CosyVoice/cosyvoice/bin/inference.py +114 -0

- CosyVoice/cosyvoice/bin/train.py +136 -0

- CosyVoice/cosyvoice/cli/__init__.py +0 -0

- CosyVoice/cosyvoice/cli/cosyvoice.py +83 -0

- CosyVoice/cosyvoice/cli/frontend.py +168 -0

- CosyVoice/cosyvoice/cli/model.py +60 -0

- CosyVoice/cosyvoice/dataset/__init__.py +0 -0

- CosyVoice/cosyvoice/dataset/dataset.py +160 -0

- CosyVoice/cosyvoice/dataset/processor.py +369 -0

- CosyVoice/cosyvoice/flow/decoder.py +222 -0

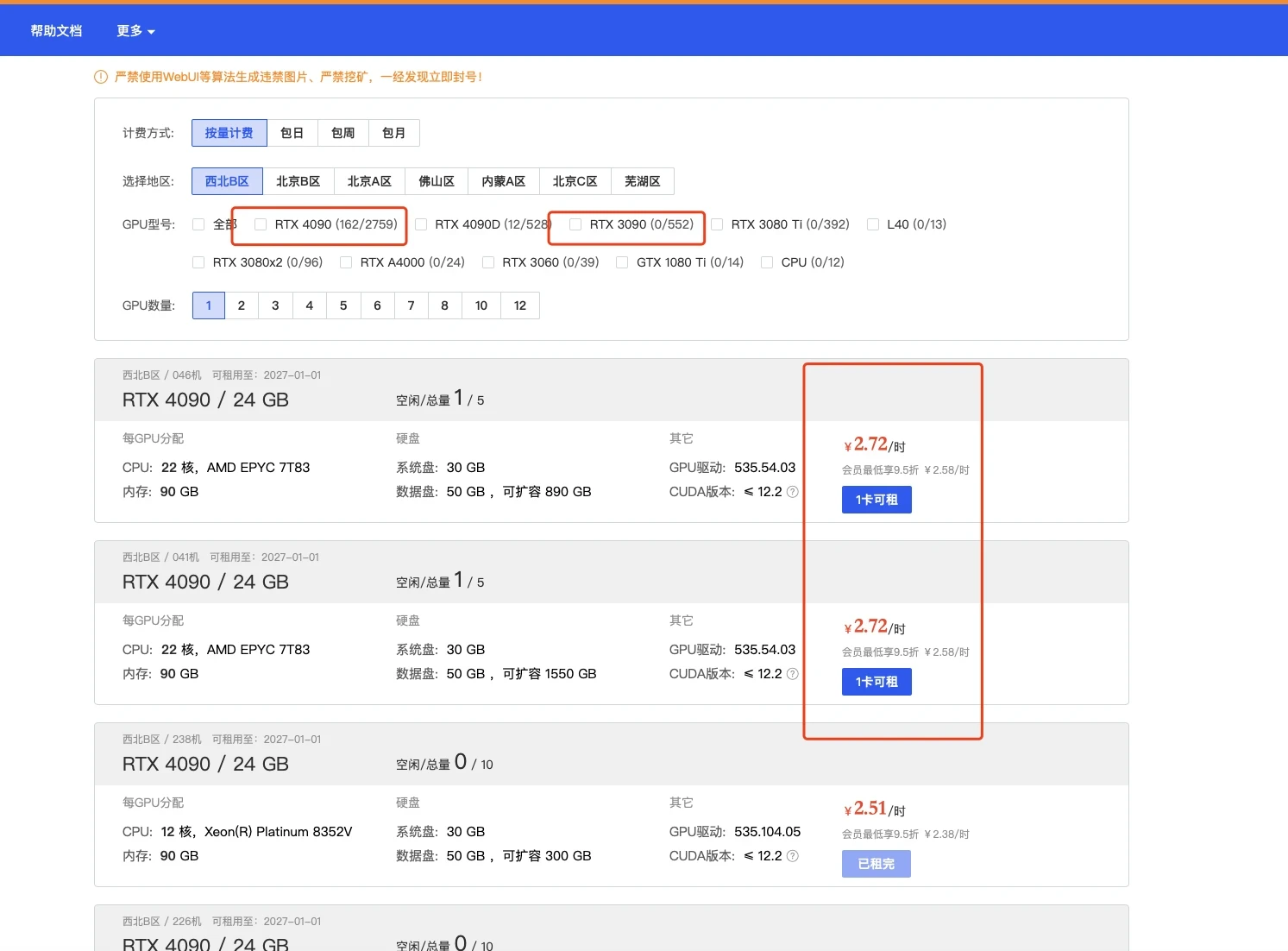

- CosyVoice/cosyvoice/flow/flow.py +141 -0

- CosyVoice/cosyvoice/flow/flow_matching.py +138 -0

- CosyVoice/cosyvoice/flow/length_regulator.py +49 -0

- CosyVoice/cosyvoice/hifigan/f0_predictor.py +55 -0

- CosyVoice/cosyvoice/hifigan/generator.py +391 -0

.gitattributes

CHANGED

|

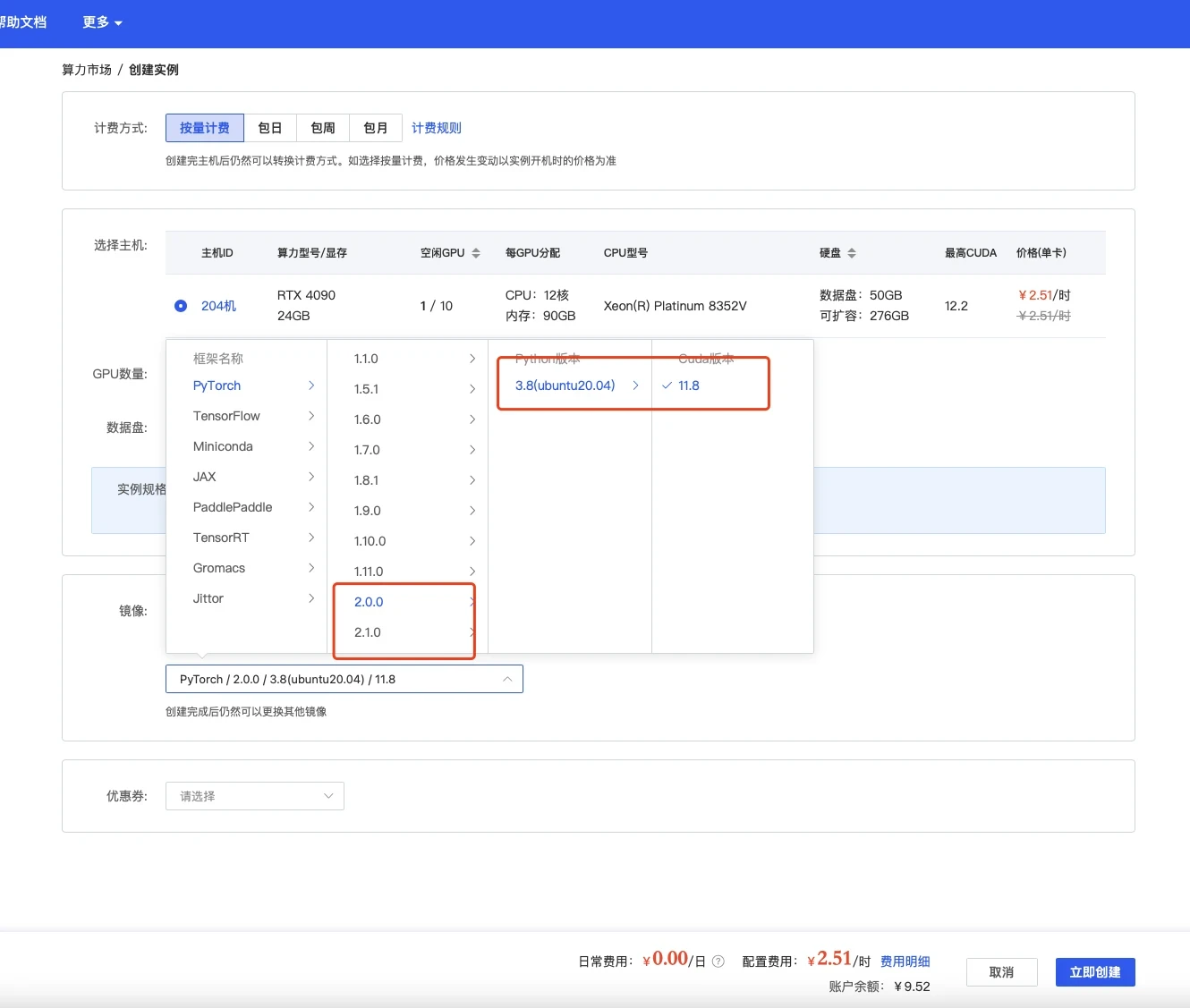

@@ -33,3 +33,17 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

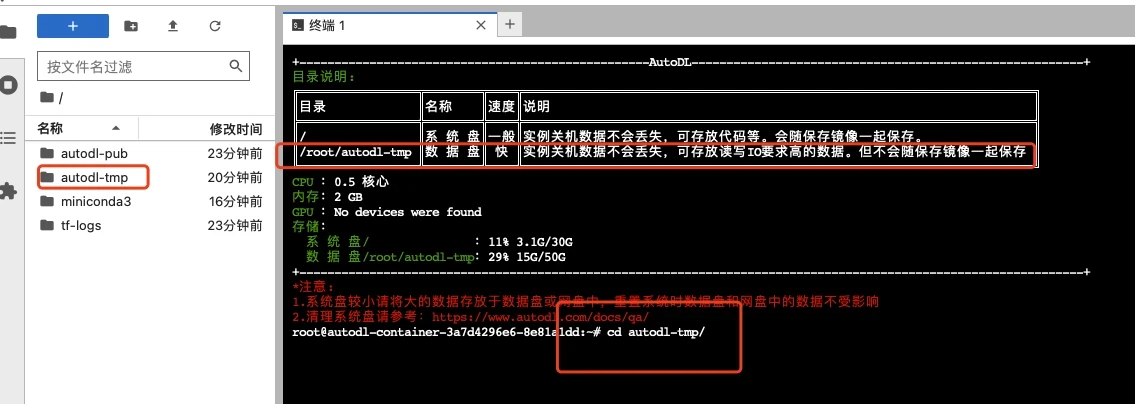

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

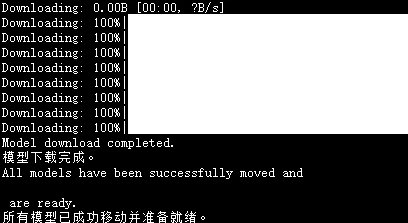

|

|

|

|

|

|

|

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

Musetalk/data/video/man_musev.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

Musetalk/data/video/monalisa_musev.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

Musetalk/data/video/seaside4_musev.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

Musetalk/data/video/sit_musev.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

Musetalk/data/video/sun_musev.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

Musetalk/data/video/yongen_musev.mp4 filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

examples/source_image/art_16.png filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

examples/source_image/art_17.png filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

examples/source_image/art_3.png filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

examples/source_image/art_4.png filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

examples/source_image/art_5.png filter=lfs diff=lfs merge=lfs -text

|

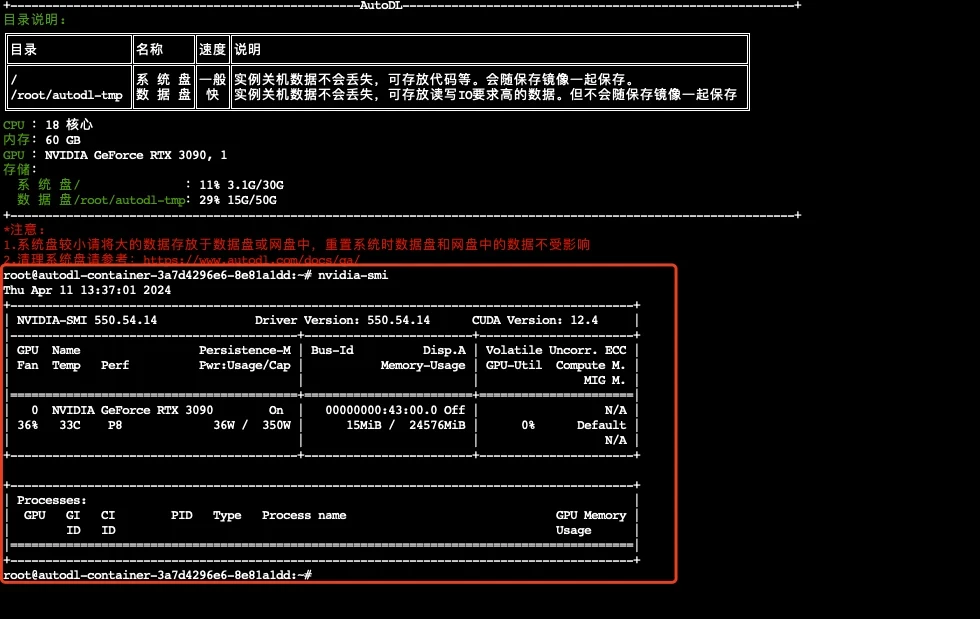

| 47 |

+

examples/source_image/art_8.png filter=lfs diff=lfs merge=lfs -text

|

| 48 |

+

examples/source_image/art_9.png filter=lfs diff=lfs merge=lfs -text

|

| 49 |

+

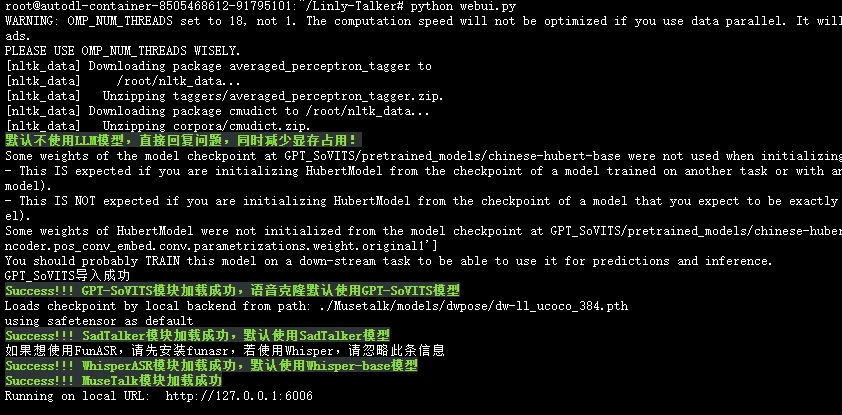

inputs/boy.png filter=lfs diff=lfs merge=lfs -text

|

.gitignore

ADDED

|

@@ -0,0 +1,15 @@

|

|

|

|

|

|

|

|

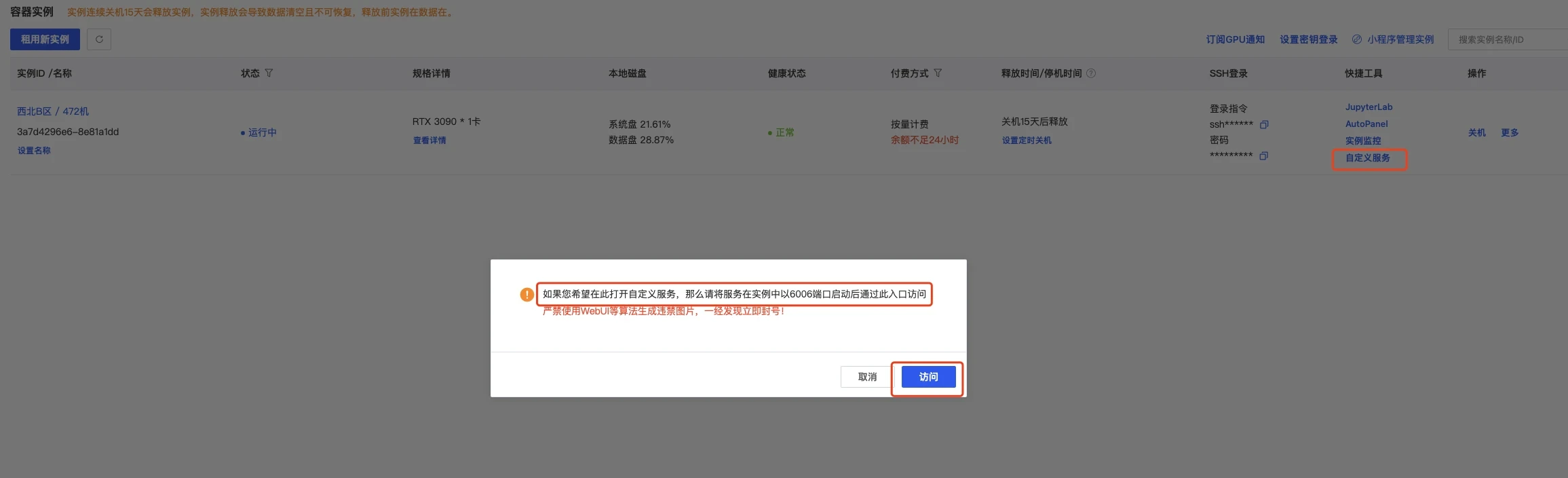

|

|

|

|

|

|

|

|

|

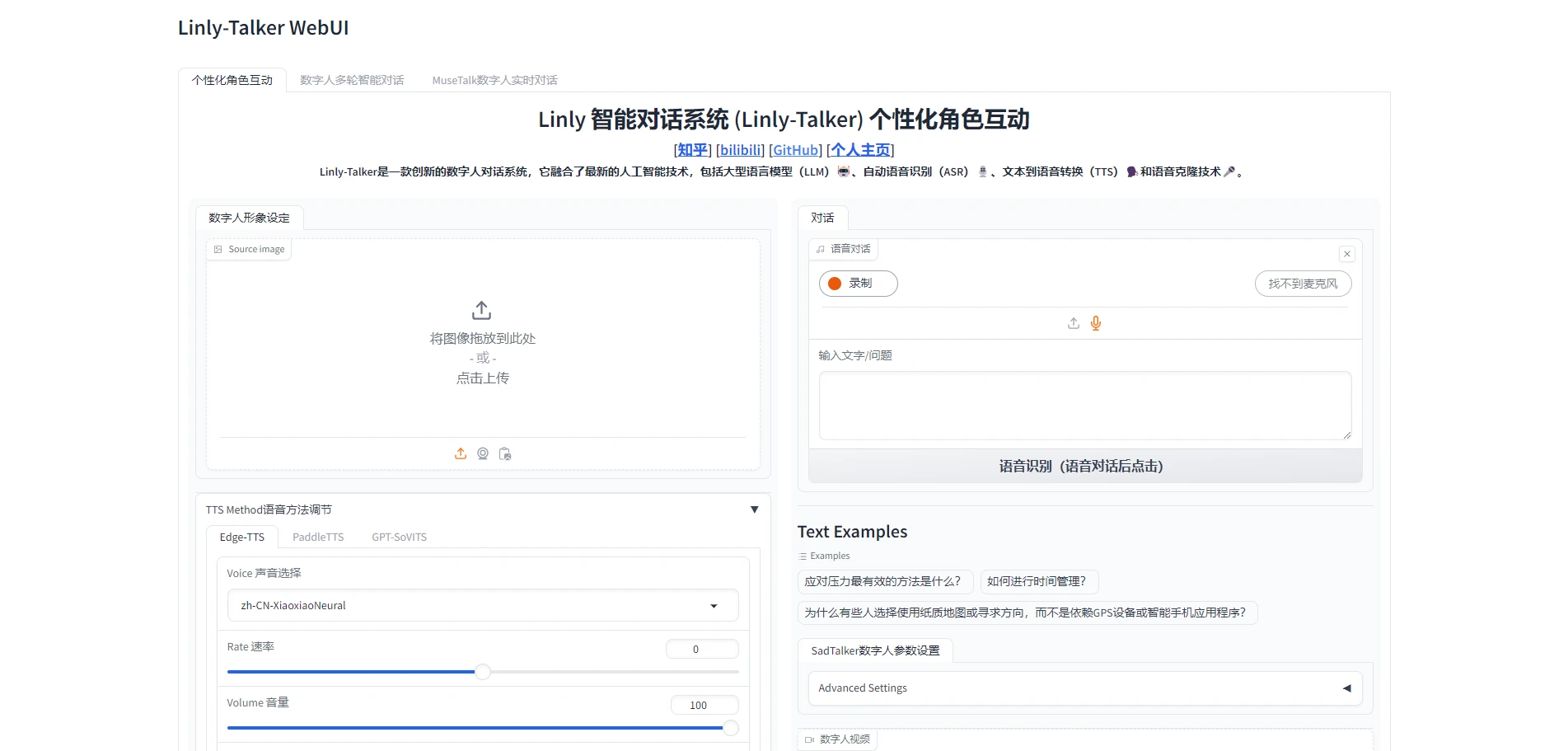

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

.DS_Store

|

| 2 |

+

checkpoints/

|

| 3 |

+

gfpgan/

|

| 4 |

+

__pycache__/

|

| 5 |

+

*.pyc

|

| 6 |

+

Linly-AI

|

| 7 |

+

Qwen

|

| 8 |

+

checkpoints

|

| 9 |

+

temp

|

| 10 |

+

*.wav

|

| 11 |

+

*.vtt

|

| 12 |

+

*.srt

|

| 13 |

+

results/example_answer.mp4

|

| 14 |

+

request-Linly-api.py

|

| 15 |

+

results

|

.gitmodules

ADDED

|

@@ -0,0 +1,10 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

[submodule "MuseV"]

|

| 2 |

+

path = MuseV

|

| 3 |

+

url = https://github.com/TMElyralab/MuseV.git

|

| 4 |

+

|

| 5 |

+

[submodule "ChatTTS"]

|

| 6 |

+

path = ChatTTS

|

| 7 |

+

url = https://github.com/2noise/ChatTTS.git

|

| 8 |

+

[submodule "CosyVoice"]

|

| 9 |

+

path = CosyVoice

|

| 10 |

+

url = https://github.com/FunAudioLLM/CosyVoice.git

|

ASR/FunASR.py

ADDED

|

@@ -0,0 +1,54 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

'''

|

| 2 |

+

Reference: https://github.com/alibaba-damo-academy/FunASR

|

| 3 |

+

pip install funasr

|

| 4 |

+

pip install modelscope

|

| 5 |

+

pip install -U rotary_embedding_torch

|

| 6 |

+

'''

|

| 7 |

+

try:

|

| 8 |

+

from funasr import AutoModel

|

| 9 |

+

except:

|

| 10 |

+

print("如果想使用FunASR,请先安装funasr,若使用Whisper,请忽略此条信息")

|

| 11 |

+

import os

|

| 12 |

+

import sys

|

| 13 |

+

sys.path.append('./')

|

| 14 |

+

from src.cost_time import calculate_time

|

| 15 |

+

|

| 16 |

+

class FunASR:

|

| 17 |

+

def __init__(self) -> None:

|

| 18 |

+

# 定义模型的自定义路径

|

| 19 |

+

model_path = "FunASR/speech_seaco_paraformer_large_asr_nat-zh-cn-16k-common-vocab8404-pytorch"

|

| 20 |

+

vad_model_path = "FunASR/speech_fsmn_vad_zh-cn-16k-common-pytorch"

|

| 21 |

+

punc_model_path = "FunASR/punc_ct-transformer_zh-cn-common-vocab272727-pytorch"

|

| 22 |

+

|

| 23 |

+

# 检查文件是否存在于 FunASR 目录下

|

| 24 |

+

model_exists = os.path.exists(model_path)

|

| 25 |

+

vad_model_exists = os.path.exists(vad_model_path)

|

| 26 |

+

punc_model_exists = os.path.exists(punc_model_path)

|

| 27 |

+

# Modelscope AutoDownload

|

| 28 |

+

self.model = AutoModel(

|

| 29 |

+

model=model_path if model_exists else "paraformer-zh",

|

| 30 |

+

vad_model=vad_model_path if vad_model_exists else "fsmn-vad",

|

| 31 |

+

punc_model=punc_model_path if punc_model_exists else "ct-punc-c",

|

| 32 |

+

)

|

| 33 |

+

# 自定义路径

|

| 34 |

+

# self.model = AutoModel(model="FunASR/speech_seaco_paraformer_large_asr_nat-zh-cn-16k-common-vocab8404-pytorch", # model_revision="v2.0.4",

|

| 35 |

+

# vad_model="FunASR/speech_fsmn_vad_zh-cn-16k-common-pytorch", # vad_model_revision="v2.0.4",

|

| 36 |

+

# punc_model="FunASR/punc_ct-transformer_zh-cn-common-vocab272727-pytorch", # punc_model_revision="v2.0.4",

|

| 37 |

+

# # spk_model="cam++", spk_model_revision="v2.0.2",

|

| 38 |

+

# )

|

| 39 |

+

@calculate_time

|

| 40 |

+

def transcribe(self, audio_file):

|

| 41 |

+

res = self.model.generate(input=audio_file,

|

| 42 |

+

batch_size_s=300)

|

| 43 |

+

print(res)

|

| 44 |

+

return res[0]['text']

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

if __name__ == "__main__":

|

| 48 |

+

import os

|

| 49 |

+

# 创建ASR对象并进行语音识别

|

| 50 |

+

audio_file = "output.wav" # 音频文件路径

|

| 51 |

+

if not os.path.exists(audio_file):

|

| 52 |

+

os.system('edge-tts --text "hello" --write-media output.wav')

|

| 53 |

+

asr = FunASR()

|

| 54 |

+

print(asr.transcribe(audio_file))

|

ASR/README.md

ADDED

|

@@ -0,0 +1,77 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

## ASR 同数字人沟通的桥梁

|

| 2 |

+

|

| 3 |

+

### Whisper OpenAI

|

| 4 |

+

|

| 5 |

+

Whisper 是一个自动语音识别 (ASR) 系统,它使用从网络上收集的 680,000 小时多语言和多任务监督数据进行训练。使用如此庞大且多样化的数据集可以提高对口音、背景噪音和技术语言的鲁棒性。此外,它还支持多种语言的转录,以及将这些语言翻译成英语。

|

| 6 |

+

|

| 7 |

+

使用方法很简单,我们只要安装以下库,后续模型会自动下载

|

| 8 |

+

|

| 9 |

+

```bash

|

| 10 |

+

pip install -U openai-whisper

|

| 11 |

+

```

|

| 12 |

+

|

| 13 |

+

借鉴OpenAI的Whisper实现了ASR的语音识别,具体使用方法参考 [https://github.com/openai/whisper](https://github.com/openai/whisper)

|

| 14 |

+

|

| 15 |

+

```python

|

| 16 |

+

'''

|

| 17 |

+

https://github.com/openai/whisper

|

| 18 |

+

pip install -U openai-whisper

|

| 19 |

+

'''

|

| 20 |

+

import whisper

|

| 21 |

+

|

| 22 |

+

class WhisperASR:

|

| 23 |

+

def __init__(self, model_path):

|

| 24 |

+

self.LANGUAGES = {

|

| 25 |

+

"en": "english",

|

| 26 |

+

"zh": "chinese",

|

| 27 |

+

}

|

| 28 |

+

self.model = whisper.load_model(model_path)

|

| 29 |

+

|

| 30 |

+

def transcribe(self, audio_file):

|

| 31 |

+

result = self.model.transcribe(audio_file)

|

| 32 |

+

return result["text"]

|

| 33 |

+

```

|

| 34 |

+

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

### FunASR Alibaba

|

| 38 |

+

|

| 39 |

+

阿里的`FunASR`的语音识别效果也是相当不错,而且时间也是比whisper更快的,更能达到实时的效果,所以也将FunASR添加进去了,在ASR文件夹下的FunASR文件里可以进行体验,参考 [https://github.com/alibaba-damo-academy/FunASR](https://github.com/alibaba-damo-academy/FunASR)

|

| 40 |

+

|

| 41 |

+

需要注意的是,在第一次运行的时候,需要安装以下库。

|

| 42 |

+

|

| 43 |

+

```bash

|

| 44 |

+

pip install funasr

|

| 45 |

+

pip install modelscope

|

| 46 |

+

pip install -U rotary_embedding_torch

|

| 47 |

+

```

|

| 48 |

+

|

| 49 |

+

```python

|

| 50 |

+

'''

|

| 51 |

+

Reference: https://github.com/alibaba-damo-academy/FunASR

|

| 52 |

+

pip install funasr

|

| 53 |

+

pip install modelscope

|

| 54 |

+

pip install -U rotary_embedding_torch

|

| 55 |

+

'''

|

| 56 |

+

try:

|

| 57 |

+

from funasr import AutoModel

|

| 58 |

+

except:

|

| 59 |

+

print("如果想使用FunASR,请先安装funasr,若使用Whisper,请忽略此条信息")

|

| 60 |

+

|

| 61 |

+

class FunASR:

|

| 62 |

+

def __init__(self) -> None:

|

| 63 |

+

self.model = AutoModel(model="paraformer-zh", model_revision="v2.0.4",

|

| 64 |

+

vad_model="fsmn-vad", vad_model_revision="v2.0.4",

|

| 65 |

+

punc_model="ct-punc-c", punc_model_revision="v2.0.4",

|

| 66 |

+

# spk_model="cam++", spk_model_revision="v2.0.2",

|

| 67 |

+

)

|

| 68 |

+

|

| 69 |

+

def transcribe(self, audio_file):

|

| 70 |

+

res = self.model.generate(input=audio_file,

|

| 71 |

+

batch_size_s=300)

|

| 72 |

+

print(res)

|

| 73 |

+

return res[0]['text']

|

| 74 |

+

```

|

| 75 |

+

|

| 76 |

+

|

| 77 |

+

|

ASR/Whisper.py

ADDED

|

@@ -0,0 +1,129 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

'''

|

| 2 |

+

https://github.com/openai/whisper

|

| 3 |

+

pip install -U openai-whisper

|

| 4 |

+

'''

|

| 5 |

+

import whisper

|

| 6 |

+

import sys

|

| 7 |

+

sys.path.append('./')

|

| 8 |

+

from src.cost_time import calculate_time

|

| 9 |

+

|

| 10 |

+

class WhisperASR:

|

| 11 |

+

def __init__(self, model_path):

|

| 12 |

+

self.LANGUAGES = {

|

| 13 |

+

"en": "english",

|

| 14 |

+

"zh": "chinese",

|

| 15 |

+

"de": "german",

|

| 16 |

+

"es": "spanish",

|

| 17 |

+

"ru": "russian",

|

| 18 |

+

"ko": "korean",

|

| 19 |

+

"fr": "french",

|

| 20 |

+

"ja": "japanese",

|

| 21 |

+

"pt": "portuguese",

|

| 22 |

+

"tr": "turkish",

|

| 23 |

+

"pl": "polish",

|

| 24 |

+

"ca": "catalan",

|

| 25 |

+

"nl": "dutch",

|

| 26 |

+

"ar": "arabic",

|

| 27 |

+

"sv": "swedish",

|

| 28 |

+

"it": "italian",

|

| 29 |

+

"id": "indonesian",

|

| 30 |

+

"hi": "hindi",

|

| 31 |

+

"fi": "finnish",

|

| 32 |

+

"vi": "vietnamese",

|

| 33 |

+

"he": "hebrew",

|

| 34 |

+

"uk": "ukrainian",

|

| 35 |

+

"el": "greek",

|

| 36 |

+

"ms": "malay",

|

| 37 |

+

"cs": "czech",

|

| 38 |

+

"ro": "romanian",

|

| 39 |

+

"da": "danish",

|

| 40 |

+

"hu": "hungarian",

|

| 41 |

+

"ta": "tamil",

|

| 42 |

+

"no": "norwegian",

|

| 43 |

+

"th": "thai",

|

| 44 |

+

"ur": "urdu",

|

| 45 |

+

"hr": "croatian",

|

| 46 |

+

"bg": "bulgarian",

|

| 47 |

+

"lt": "lithuanian",

|

| 48 |

+

"la": "latin",

|

| 49 |

+

"mi": "maori",

|

| 50 |

+

"ml": "malayalam",

|

| 51 |

+

"cy": "welsh",

|

| 52 |

+

"sk": "slovak",

|

| 53 |

+

"te": "telugu",

|

| 54 |

+

"fa": "persian",

|

| 55 |

+

"lv": "latvian",

|

| 56 |

+

"bn": "bengali",

|

| 57 |

+

"sr": "serbian",

|

| 58 |

+

"az": "azerbaijani",

|

| 59 |

+

"sl": "slovenian",

|

| 60 |

+

"kn": "kannada",

|

| 61 |

+

"et": "estonian",

|

| 62 |

+

"mk": "macedonian",

|

| 63 |

+

"br": "breton",

|

| 64 |

+

"eu": "basque",

|

| 65 |

+

"is": "icelandic",

|

| 66 |

+

"hy": "armenian",

|

| 67 |

+

"ne": "nepali",

|

| 68 |

+

"mn": "mongolian",

|

| 69 |

+

"bs": "bosnian",

|

| 70 |

+

"kk": "kazakh",

|

| 71 |

+

"sq": "albanian",

|

| 72 |

+

"sw": "swahili",

|

| 73 |

+

"gl": "galician",

|

| 74 |

+

"mr": "marathi",

|

| 75 |

+

"pa": "punjabi",

|

| 76 |

+

"si": "sinhala",

|

| 77 |

+

"km": "khmer",

|

| 78 |

+

"sn": "shona",

|

| 79 |

+

"yo": "yoruba",

|

| 80 |

+

"so": "somali",

|

| 81 |

+

"af": "afrikaans",

|

| 82 |

+

"oc": "occitan",

|

| 83 |

+

"ka": "georgian",

|

| 84 |

+

"be": "belarusian",

|

| 85 |

+

"tg": "tajik",

|

| 86 |

+

"sd": "sindhi",

|

| 87 |

+

"gu": "gujarati",

|

| 88 |

+

"am": "amharic",

|

| 89 |

+

"yi": "yiddish",

|

| 90 |

+

"lo": "lao",

|

| 91 |

+

"uz": "uzbek",

|

| 92 |

+

"fo": "faroese",

|

| 93 |

+

"ht": "haitian creole",

|

| 94 |

+

"ps": "pashto",

|

| 95 |

+

"tk": "turkmen",

|

| 96 |

+

"nn": "nynorsk",

|

| 97 |

+

"mt": "maltese",

|

| 98 |

+

"sa": "sanskrit",

|

| 99 |

+

"lb": "luxembourgish",

|

| 100 |

+

"my": "myanmar",

|

| 101 |

+

"bo": "tibetan",

|

| 102 |

+

"tl": "tagalog",

|

| 103 |

+

"mg": "malagasy",

|

| 104 |

+

"as": "assamese",

|

| 105 |

+

"tt": "tatar",

|

| 106 |

+

"haw": "hawaiian",

|

| 107 |

+

"ln": "lingala",

|

| 108 |

+

"ha": "hausa",

|

| 109 |

+

"ba": "bashkir",

|

| 110 |

+

"jw": "javanese",

|

| 111 |

+

"su": "sundanese",

|

| 112 |

+

}

|

| 113 |

+

self.model = whisper.load_model(model_path)

|

| 114 |

+

|

| 115 |

+

@calculate_time

|

| 116 |

+

def transcribe(self, audio_file):

|

| 117 |

+

result = self.model.transcribe(audio_file)

|

| 118 |

+

return result["text"]

|

| 119 |

+

|

| 120 |

+

|

| 121 |

+

if __name__ == "__main__":

|

| 122 |

+

import os

|

| 123 |

+

# 创建ASR对象并进行语音识别

|

| 124 |

+

model_path = "./Whisper/tiny.pt" # 模型路径

|

| 125 |

+

audio_file = "output.wav" # 音频文件路径

|

| 126 |

+

if not os.path.exists(audio_file):

|

| 127 |

+

os.system('edge-tts --text "hello" --write-media output.wav')

|

| 128 |

+

asr = WhisperASR(model_path)

|

| 129 |

+

print(asr.transcribe(audio_file))

|

ASR/__init__.py

ADDED

|

@@ -0,0 +1,4 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from .Whisper import WhisperASR

|

| 2 |

+

from .FunASR import FunASR

|

| 3 |

+

|

| 4 |

+

__all__ = ['WhisperASR', 'FunASR']

|

ASR/requirements_funasr.txt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

funasr

|

| 2 |

+

modelscope

|

| 3 |

+

# rotary_embedding_torch

|

AutoDL部署.md

ADDED

|

@@ -0,0 +1,215 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# 在AutoDL平台部署Linly-Talker (0基础小白超详细教程)

|

| 2 |

+

|

| 3 |

+

<!-- TOC -->

|

| 4 |

+

|

| 5 |

+

- [在AutoDL平台部署Linly-Talker 0基础小白超详细教程](#%E5%9C%A8autodl%E5%B9%B3%E5%8F%B0%E9%83%A8%E7%BD%B2linly-talker-0%E5%9F%BA%E7%A1%80%E5%B0%8F%E7%99%BD%E8%B6%85%E8%AF%A6%E7%BB%86%E6%95%99%E7%A8%8B)

|

| 6 |

+

- [快速上手直接使用镜像以下安装操作全免](#%E5%BF%AB%E9%80%9F%E4%B8%8A%E6%89%8B%E7%9B%B4%E6%8E%A5%E4%BD%BF%E7%94%A8%E9%95%9C%E5%83%8F%E4%BB%A5%E4%B8%8B%E5%AE%89%E8%A3%85%E6%93%8D%E4%BD%9C%E5%85%A8%E5%85%8D)

|

| 7 |

+

- [一、注册AutoDL](#%E4%B8%80%E6%B3%A8%E5%86%8Cautodl)

|

| 8 |

+

- [二、创建实例](#%E4%BA%8C%E5%88%9B%E5%BB%BA%E5%AE%9E%E4%BE%8B)

|

| 9 |

+

- [登录AutoDL,进入算力市场,选择机器](#%E7%99%BB%E5%BD%95autodl%E8%BF%9B%E5%85%A5%E7%AE%97%E5%8A%9B%E5%B8%82%E5%9C%BA%E9%80%89%E6%8B%A9%E6%9C%BA%E5%99%A8)

|

| 10 |

+

- [配置基础镜像](#%E9%85%8D%E7%BD%AE%E5%9F%BA%E7%A1%80%E9%95%9C%E5%83%8F)

|

| 11 |

+

- [无卡模式开机](#%E6%97%A0%E5%8D%A1%E6%A8%A1%E5%BC%8F%E5%BC%80%E6%9C%BA)

|

| 12 |

+

- [三、部署环境](#%E4%B8%89%E9%83%A8%E7%BD%B2%E7%8E%AF%E5%A2%83)

|

| 13 |

+

- [进入终端](#%E8%BF%9B%E5%85%A5%E7%BB%88%E7%AB%AF)

|

| 14 |

+

- [下载代码文件](#%E4%B8%8B%E8%BD%BD%E4%BB%A3%E7%A0%81%E6%96%87%E4%BB%B6)

|

| 15 |

+

- [下载模型文件](#%E4%B8%8B%E8%BD%BD%E6%A8%A1%E5%9E%8B%E6%96%87%E4%BB%B6)

|

| 16 |

+

- [四、Linly-Talker项目](#%E5%9B%9Blinly-talker%E9%A1%B9%E7%9B%AE)

|

| 17 |

+

- [环境安装](#%E7%8E%AF%E5%A2%83%E5%AE%89%E8%A3%85)

|

| 18 |

+

- [端口设置](#%E7%AB%AF%E5%8F%A3%E8%AE%BE%E7%BD%AE)

|

| 19 |

+

- [有卡开机](#%E6%9C%89%E5%8D%A1%E5%BC%80%E6%9C%BA)

|

| 20 |

+

- [运行网页版对话webui](#%E8%BF%90%E8%A1%8C%E7%BD%91%E9%A1%B5%E7%89%88%E5%AF%B9%E8%AF%9Dwebui)

|

| 21 |

+

- [端口映射](#%E7%AB%AF%E5%8F%A3%E6%98%A0%E5%B0%84)

|

| 22 |

+

- [体验Linly-Talker(成功)](#%E4%BD%93%E9%AA%8Clinly-talker%E6%88%90%E5%8A%9F)

|

| 23 |

+

|

| 24 |

+

<!-- /TOC -->

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

|

| 28 |

+

## 快速上手直接使用镜像(以下安装操作全免)

|

| 29 |

+

|

| 30 |

+

若使用我设定好的镜像,可以直接运行即可,不需要安装环境,直接运行webui.py或者是app_talk.py即可体验,不需要安装任何环境,可直接跳到4.4即可

|

| 31 |

+

|

| 32 |

+

访问后在自定义设置里面打开端口,默认是6006端口,直接使用运行即可!

|

| 33 |

+

|

| 34 |

+

```bash

|

| 35 |

+

python webui.py

|

| 36 |

+

python app_talk.py

|

| 37 |

+

```

|

| 38 |

+

|

| 39 |

+

环境模型都安装好了,直接使用即可,镜像地址在:[https://www.codewithgpu.com/i/Kedreamix/Linly-Talker/Kedreamix-Linly-Talker](https://www.codewithgpu.com/i/Kedreamix/Linly-Talker/Kedreamix-Linly-Talker),感谢大家的支持

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

## 一、注册AutoDL

|

| 44 |

+

|

| 45 |

+

[AutoDL官网](https://www.autodl.com/home) 注册账户好并充值,自己选择机器,我觉得如果正常跑一下,5元已经够了

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

|

| 49 |

+

## 二、创建实例

|

| 50 |

+

|

| 51 |

+

### 2.1 登录AutoDL,进入算力市场,选择机器

|

| 52 |

+

|

| 53 |

+

这一部分实际上我觉得12g都OK的,无非是速度问题而已

|

| 54 |

+

|

| 55 |

+

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

|

| 59 |

+

### 2.2 配置基础镜像

|

| 60 |

+

|

| 61 |

+

选择镜像,最好选择2.0以上可以体验克隆声音功能,其他无所谓

|

| 62 |

+

|

| 63 |

+

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

### 2.3 无卡模式开机

|

| 68 |

+

|

| 69 |

+

创建成功后为了省钱先关机,然后使用无卡模式开机。

|

| 70 |

+

无卡模式一个小时只需要0.1元,比较适合部署环境。

|

| 71 |

+

|

| 72 |

+

|

| 73 |

+

|

| 74 |

+

## 三、部署环境

|

| 75 |

+

|

| 76 |

+

### 3.1 进入终端

|

| 77 |

+

|

| 78 |

+

打开jupyterLab,进入数据盘(autodl-tmp),打开终端,将Linly-Talker模型下载到数据盘中。

|

| 79 |

+

|

| 80 |

+

|

| 81 |

+

|

| 82 |

+

|

| 83 |

+

|

| 84 |

+

### 3.2 下载代码文件

|

| 85 |

+

|

| 86 |

+

根据Github上的说明,使用命令行下载模型文件和代码文件,利用学术加速会快一点

|

| 87 |

+

|

| 88 |

+

```bash

|

| 89 |

+

# 开启学术镜像,更快的clone代码 参考 https://www.autodl.com/docs/network_turbo/

|

| 90 |

+

source /etc/network_turbo

|

| 91 |

+

|

| 92 |

+

cd /root/autodl-tmp/

|

| 93 |

+

# 下载代码

|

| 94 |

+

git clone https://github.com/Kedreamix/Linly-Talker.git --depth 1

|

| 95 |

+

|

| 96 |

+

# 取消学术加速

|

| 97 |

+

unset http_proxy && unset https_proxy

|

| 98 |

+

```

|

| 99 |

+

|

| 100 |

+

|

| 101 |

+

|

| 102 |

+

### 3.3 下载模型文件

|

| 103 |

+

|

| 104 |

+

我制作一个脚本可以完成下述所有模型的下载,无需用户过多操作。这种方式适合网络稳定的情况,并且特别适合 Linux 用户。对于 Windows 用户,也可以使用 Git 来下载模型。如果网络环境不稳定,用户可以选择使用手动下载方法,或者尝试运行 Shell 脚本来完成下载。脚本具有以下功能。

|

| 105 |

+

|

| 106 |

+

1. **选择下载方式**: 用户可以选择从三种不同的源下载模型:ModelScope、Huggingface 或 Huggingface 镜像站点。

|

| 107 |

+

2. **下载模型**: 根据用户的选择,执行相应的下载命令。

|

| 108 |

+

3. **移动模型文件**: 下载完成后,将模型文件移动到指定的目录。

|

| 109 |

+

4. **错误处理**: 在每一步操作中加入了错误检查,如果操作失败,脚本会输出错误���息并停止执行。

|

| 110 |

+

|

| 111 |

+

选择使用`modelscope`来下载会快一点,不需要开学术加速,记得首先需要先安装modelscope库

|

| 112 |

+

|

| 113 |

+

```sh

|

| 114 |

+

# 下载modelscope

|

| 115 |

+

pip install modelscope -i https://pypi.tuna.tsinghua.edu.cn/simple

|

| 116 |

+

cd /root/autodl-tmp/Linly-Talker

|

| 117 |

+

sh scripts/download_models.sh

|

| 118 |

+

```

|

| 119 |

+

|

| 120 |

+

|

| 121 |

+

|

| 122 |

+

等待一段时间下载完以后,脚本会自动移动到对应的目录

|

| 123 |

+

|

| 124 |

+

|

| 125 |

+

|

| 126 |

+

## 四、Linly-Talker项目

|

| 127 |

+

|

| 128 |

+

### 4.1 环境安装

|

| 129 |

+

|

| 130 |

+

进入代码路径,进行安装环境,由于选了镜像是含有pytorch的,所以只需要进行安装其他依赖即可,可能需要花一定的时间,建议直接使用安装好的镜像

|

| 131 |

+

|

| 132 |

+

```bash

|

| 133 |

+

cd /root/autodl-tmp/Linly-Talker

|

| 134 |

+

|

| 135 |

+

conda install ffmpeg==4.2.2 # ffmpeg==4.2.2

|

| 136 |

+

|

| 137 |

+

# 升级pip

|

| 138 |

+

python -m pip install --upgrade pip

|

| 139 |

+

# 更换 pypi 源加速库的安装

|

| 140 |

+

pip config set global.index-url https://pypi.tuna.tsinghua.edu.cn/simple

|

| 141 |

+

|

| 142 |

+

pip install tb-nightly -i https://mirrors.aliyun.com/pypi/simple

|

| 143 |

+

pip install -r requirements_webui.txt

|

| 144 |

+

|

| 145 |

+

# 安装有关musetalk依赖

|

| 146 |

+

pip install --no-cache-dir -U openmim

|

| 147 |

+

mim install mmengine

|

| 148 |

+

mim install "mmcv>=2.0.1"

|

| 149 |

+

mim install "mmdet>=3.1.0"

|

| 150 |

+

mim install "mmpose>=1.1.0"

|

| 151 |

+

|

| 152 |

+

# 安装NeRF-based依赖,可能问题较多,可以先放弃

|

| 153 |

+

# 亲测需要有卡开机后再跑这个pytorch3d,需要一定的内存来编译

|

| 154 |

+

pip install "git+https://github.com/facebookresearch/pytorch3d.git"

|

| 155 |

+

|

| 156 |

+

# 若pyaudio出现问题,可安装对应依赖

|

| 157 |

+

sudo apt-get update

|

| 158 |

+

sudo apt-get install libasound-dev portaudio19-dev libportaudio2 libportaudiocpp0

|

| 159 |

+

pip install -r TFG/requirements_nerf.txt

|

| 160 |

+

```

|

| 161 |

+

|

| 162 |

+

|

| 163 |

+

|

| 164 |

+

### 4.2 有卡开机

|

| 165 |

+

|

| 166 |

+

进入autodl容器实例界面,执行关机操作,然后进行有卡开机,开机后打开jupyterLab。

|

| 167 |

+

|

| 168 |

+

查看配置

|

| 169 |

+

|

| 170 |

+

```bash

|

| 171 |

+

nvidia-smi

|

| 172 |

+

```

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

|

| 176 |

+

|

| 177 |

+

|

| 178 |

+

### 4.3 运行网页版对话webui

|

| 179 |

+

|

| 180 |

+

需要有卡模式开机,执行下边命令,这里面就跟代码是一模一样的了

|

| 181 |

+

|

| 182 |

+

```bash

|

| 183 |

+

cd /root/autodl-tmp/Linly-Talker

|

| 184 |

+

# 第一次运行可能会下载部分nltk,可以使用一下学术加速

|

| 185 |

+

source /etc/network_turbo

|

| 186 |

+

python webui.py

|

| 187 |

+

```

|

| 188 |

+

|

| 189 |

+

|

| 190 |

+

|

| 191 |

+

### 4.4 端口映射

|

| 192 |

+

|

| 193 |

+

这可以直接打开autodl的自定义服务,默认是6006端口,我们已经设置了,所以直接使用即可

|

| 194 |

+

|

| 195 |

+

|

| 196 |

+

|

| 197 |

+

另外还有一种端口映射方式,是通过输入ssh账密实现的,步骤是一样的

|

| 198 |

+

|

| 199 |

+

> ssh端口映射工具:windows:[https://autodl-public.ks3-cn-beijing.ksyuncs.com/tool/AutoDL-SSH-Tools.zip](https://autodl-public.ks3-cn-beijing.ksyuncs.com/tool/AutoDL-SSH-Tools.zip)

|

| 200 |

+

|

| 201 |

+

### 4.5 体验Linly-Talker(成功)

|

| 202 |

+

|

| 203 |

+

点开网页,即可正确执行Linly-Talker,这一部分就跟视频一模一样了

|

| 204 |

+

|

| 205 |

+

|

| 206 |

+

|

| 207 |

+

|

| 208 |

+

|

| 209 |

+

|

| 210 |

+

|

| 211 |

+

|

| 212 |

+

|

| 213 |

+

**!!!注意:不用了,一定要去控制台=》容器实例,把镜像实例关机,它是按时收费的,不关机会一直扣费的。**

|

| 214 |

+

|

| 215 |

+

**建议选北京区的,稍微便宜一些。可以晚上部署,网速快,便宜的GPU也充足。白天部署,北京区的GPU容易没有。**

|

ChatTTS/.gitignore

ADDED

|

@@ -0,0 +1,163 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Byte-compiled / optimized / DLL files

|

| 2 |

+

__pycache__/

|

| 3 |

+

*.py[cod]

|

| 4 |

+

*$py.class

|

| 5 |

+

*.ckpt

|

| 6 |

+

# C extensions

|

| 7 |

+

*.so

|

| 8 |

+

*.pt

|

| 9 |

+

|

| 10 |

+

# Distribution / packaging

|

| 11 |

+

.Python

|

| 12 |

+

outputs/

|

| 13 |

+

build/

|

| 14 |

+

develop-eggs/

|

| 15 |

+

dist/

|

| 16 |

+

downloads/

|

| 17 |

+

eggs/

|

| 18 |

+

.eggs/

|

| 19 |

+

lib/

|

| 20 |

+

lib64/

|

| 21 |

+

parts/

|

| 22 |

+

sdist/

|

| 23 |

+

var/

|

| 24 |

+

wheels/

|

| 25 |

+

share/python-wheels/

|

| 26 |

+

*.egg-info/

|

| 27 |

+

asset/*

|

| 28 |

+

.installed.cfg

|

| 29 |

+

*.egg

|

| 30 |

+

MANIFEST

|

| 31 |

+

|

| 32 |

+

# PyInstaller

|

| 33 |

+

# Usually these files are written by a python script from a template

|

| 34 |

+

# before PyInstaller builds the exe, so as to inject date/other infos into it.

|

| 35 |

+

*.manifest

|

| 36 |

+

*.spec

|

| 37 |

+

|

| 38 |

+

# Installer logs

|

| 39 |

+

pip-log.txt

|

| 40 |

+

pip-delete-this-directory.txt

|

| 41 |

+

|

| 42 |

+

# Unit test / coverage reports

|

| 43 |

+

htmlcov/

|

| 44 |

+

.tox/

|

| 45 |

+

.nox/

|

| 46 |

+

.coverage

|

| 47 |

+

.coverage.*

|

| 48 |

+

.cache

|

| 49 |

+

nosetests.xml

|

| 50 |

+

coverage.xml

|

| 51 |

+

*.cover

|

| 52 |

+

*.py,cover

|

| 53 |

+

.hypothesis/

|

| 54 |

+

.pytest_cache/

|

| 55 |

+

cover/

|

| 56 |

+

|

| 57 |

+

# Translations

|

| 58 |

+

*.mo

|

| 59 |

+

*.pot

|

| 60 |

+

|

| 61 |

+

# Django stuff:

|

| 62 |

+

*.log

|

| 63 |

+

local_settings.py

|

| 64 |

+

db.sqlite3

|

| 65 |

+

db.sqlite3-journal

|

| 66 |

+

|

| 67 |

+

# Flask stuff:

|

| 68 |

+

instance/

|

| 69 |

+

.webassets-cache

|

| 70 |

+

|

| 71 |

+

# Scrapy stuff:

|

| 72 |

+

.scrapy

|

| 73 |

+

|

| 74 |

+

# Sphinx documentation

|

| 75 |

+

docs/_build/

|

| 76 |

+

|

| 77 |

+

# PyBuilder

|

| 78 |

+

.pybuilder/

|

| 79 |

+

target/

|

| 80 |

+

|

| 81 |

+

# Jupyter Notebook

|

| 82 |

+

.ipynb_checkpoints

|

| 83 |

+

|

| 84 |

+

# IPython

|

| 85 |

+

profile_default/

|

| 86 |

+

ipython_config.py

|

| 87 |

+

|

| 88 |

+

# pyenv

|

| 89 |

+

# For a library or package, you might want to ignore these files since the code is

|

| 90 |

+

# intended to run in multiple environments; otherwise, check them in:

|

| 91 |

+

# .python-version

|

| 92 |

+

|

| 93 |

+

# pipenv

|

| 94 |

+

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

|

| 95 |

+

# However, in case of collaboration, if having platform-specific dependencies or dependencies

|

| 96 |

+

# having no cross-platform support, pipenv may install dependencies that don't work, or not

|

| 97 |

+

# install all needed dependencies.

|

| 98 |

+

#Pipfile.lock

|

| 99 |

+

|

| 100 |

+

# poetry

|

| 101 |

+

# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

|

| 102 |

+

# This is especially recommended for binary packages to ensure reproducibility, and is more

|

| 103 |

+

# commonly ignored for libraries.

|

| 104 |

+

# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

|

| 105 |

+

#poetry.lock

|

| 106 |

+

|

| 107 |

+

# pdm

|

| 108 |

+

# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

|

| 109 |

+

#pdm.lock

|

| 110 |

+

# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

|

| 111 |

+

# in version control.

|

| 112 |

+

# https://pdm.fming.dev/#use-with-ide

|

| 113 |

+

.pdm.toml

|

| 114 |

+

|

| 115 |

+

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

|

| 116 |

+

__pypackages__/

|

| 117 |

+

|

| 118 |

+

# Celery stuff

|

| 119 |

+

celerybeat-schedule

|

| 120 |

+

celerybeat.pid

|

| 121 |

+

|

| 122 |

+

# SageMath parsed files

|

| 123 |

+

*.sage.py

|

| 124 |

+

|

| 125 |

+

# Environments

|

| 126 |

+

.env

|

| 127 |

+

.venv

|

| 128 |

+

env/

|

| 129 |

+

venv/

|

| 130 |

+

ENV/

|

| 131 |

+

env.bak/

|

| 132 |

+

venv.bak/

|

| 133 |

+

|

| 134 |

+

# Spyder project settings

|

| 135 |

+

.spyderproject

|

| 136 |

+

.spyproject

|

| 137 |

+

|

| 138 |

+

# Rope project settings

|

| 139 |

+

.ropeproject

|

| 140 |

+

|

| 141 |

+

# mkdocs documentation

|

| 142 |

+

/site

|

| 143 |

+

|

| 144 |

+

# mypy

|

| 145 |

+

.mypy_cache/

|

| 146 |

+

.dmypy.json

|

| 147 |

+

dmypy.json

|

| 148 |

+

|

| 149 |

+

# Pyre type checker

|

| 150 |

+

.pyre/

|

| 151 |

+

|

| 152 |

+

# pytype static type analyzer

|

| 153 |

+

.pytype/

|

| 154 |

+

|

| 155 |

+

# Cython debug symbols

|

| 156 |

+

cython_debug/

|

| 157 |

+

|

| 158 |

+

# PyCharm

|

| 159 |

+

# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

|

| 160 |

+

# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

|

| 161 |

+

# and can be added to the global gitignore or merged into this file. For a more nuclear

|

| 162 |

+

# option (not recommended) you can uncomment the following to ignore the entire idea folder.

|

| 163 |

+

#.idea/

|

ChatTTS/ChatTTS/__init__.py

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

from .core import Chat

|

ChatTTS/ChatTTS/core.py

ADDED

|

@@ -0,0 +1,200 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

import os

|

| 3 |

+

import logging

|

| 4 |

+

from functools import partial

|

| 5 |

+

from omegaconf import OmegaConf

|

| 6 |

+

|

| 7 |

+

import torch

|

| 8 |

+

from vocos import Vocos

|

| 9 |

+

from .model.dvae import DVAE

|

| 10 |

+

from .model.gpt import GPT_warpper

|

| 11 |

+

from .utils.gpu_utils import select_device

|

| 12 |

+

from .utils.infer_utils import count_invalid_characters, detect_language, apply_character_map, apply_half2full_map

|

| 13 |

+

from .utils.io_utils import get_latest_modified_file

|

| 14 |

+

from .infer.api import refine_text, infer_code

|

| 15 |

+

|

| 16 |

+

from huggingface_hub import snapshot_download

|

| 17 |

+

|

| 18 |

+

logging.basicConfig(level = logging.INFO)

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

class Chat:

|

| 22 |

+

def __init__(self, ):

|

| 23 |

+

self.pretrain_models = {}

|

| 24 |

+

self.normalizer = {}

|

| 25 |

+

self.logger = logging.getLogger(__name__)

|

| 26 |

+

|

| 27 |

+

def check_model(self, level = logging.INFO, use_decoder = False):

|

| 28 |

+

not_finish = False

|

| 29 |

+

check_list = ['vocos', 'gpt', 'tokenizer']

|

| 30 |

+

|

| 31 |

+

if use_decoder:

|

| 32 |

+

check_list.append('decoder')

|

| 33 |

+

else:

|

| 34 |

+

check_list.append('dvae')

|

| 35 |

+

|

| 36 |

+

for module in check_list:

|

| 37 |

+

if module not in self.pretrain_models:

|

| 38 |

+

self.logger.log(logging.WARNING, f'{module} not initialized.')

|

| 39 |

+

not_finish = True

|

| 40 |

+

|

| 41 |

+

if not not_finish:

|

| 42 |

+

self.logger.log(level, f'All initialized.')

|

| 43 |

+

|

| 44 |

+

return not not_finish

|

| 45 |

+

|

| 46 |

+

def load_models(self, source='huggingface', force_redownload=False, local_path='<LOCAL_PATH>', **kwargs):

|

| 47 |

+

if source == 'huggingface':

|

| 48 |

+

hf_home = os.getenv('HF_HOME', os.path.expanduser("~/.cache/huggingface"))

|

| 49 |

+

try:

|

| 50 |

+

download_path = get_latest_modified_file(os.path.join(hf_home, 'hub/models--2Noise--ChatTTS/snapshots'))

|

| 51 |

+

except:

|

| 52 |

+

download_path = None

|

| 53 |

+

if download_path is None or force_redownload:

|

| 54 |

+

self.logger.log(logging.INFO, f'Download from HF: https://huggingface.co/2Noise/ChatTTS')

|

| 55 |

+

download_path = snapshot_download(repo_id="2Noise/ChatTTS", allow_patterns=["*.pt", "*.yaml"])

|

| 56 |

+

else:

|

| 57 |

+

self.logger.log(logging.INFO, f'Load from cache: {download_path}')

|

| 58 |

+

elif source == 'local':

|

| 59 |

+

self.logger.log(logging.INFO, f'Load from local: {local_path}')

|

| 60 |

+

download_path = local_path

|

| 61 |

+

|

| 62 |

+

self._load(**{k: os.path.join(download_path, v) for k, v in OmegaConf.load(os.path.join(download_path, 'config', 'path.yaml')).items()}, **kwargs)

|

| 63 |

+

|

| 64 |

+

def _load(

|

| 65 |

+

self,

|

| 66 |

+

vocos_config_path: str = None,

|

| 67 |

+

vocos_ckpt_path: str = None,

|

| 68 |

+

dvae_config_path: str = None,

|

| 69 |

+

dvae_ckpt_path: str = None,

|

| 70 |

+

gpt_config_path: str = None,

|

| 71 |

+

gpt_ckpt_path: str = None,

|

| 72 |

+

decoder_config_path: str = None,

|

| 73 |

+

decoder_ckpt_path: str = None,

|

| 74 |

+

tokenizer_path: str = None,

|

| 75 |

+

device: str = None,

|

| 76 |

+

compile: bool = True,

|

| 77 |

+

):

|

| 78 |

+

if not device:

|

| 79 |

+

device = select_device(4096)

|

| 80 |

+

self.logger.log(logging.INFO, f'use {device}')

|

| 81 |

+

|

| 82 |

+

if vocos_config_path:

|

| 83 |

+

vocos = Vocos.from_hparams(vocos_config_path).to(device).eval()

|

| 84 |

+

assert vocos_ckpt_path, 'vocos_ckpt_path should not be None'

|

| 85 |

+

vocos.load_state_dict(torch.load(vocos_ckpt_path))

|

| 86 |

+

self.pretrain_models['vocos'] = vocos

|

| 87 |

+

self.logger.log(logging.INFO, 'vocos loaded.')

|

| 88 |

+

|

| 89 |

+

if dvae_config_path:

|

| 90 |

+

cfg = OmegaConf.load(dvae_config_path)

|

| 91 |

+

dvae = DVAE(**cfg).to(device).eval()

|

| 92 |

+

assert dvae_ckpt_path, 'dvae_ckpt_path should not be None'

|

| 93 |

+

dvae.load_state_dict(torch.load(dvae_ckpt_path, map_location='cpu'))

|

| 94 |

+

self.pretrain_models['dvae'] = dvae

|

| 95 |

+

self.logger.log(logging.INFO, 'dvae loaded.')

|

| 96 |

+

|

| 97 |

+

if gpt_config_path:

|

| 98 |

+

cfg = OmegaConf.load(gpt_config_path)

|

| 99 |

+

gpt = GPT_warpper(**cfg).to(device).eval()

|

| 100 |

+

assert gpt_ckpt_path, 'gpt_ckpt_path should not be None'

|

| 101 |

+

gpt.load_state_dict(torch.load(gpt_ckpt_path, map_location='cpu'))

|

| 102 |

+

if compile and 'cuda' in str(device):

|

| 103 |

+

gpt.gpt.forward = torch.compile(gpt.gpt.forward, backend='inductor', dynamic=True)

|

| 104 |

+

self.pretrain_models['gpt'] = gpt

|

| 105 |

+

spk_stat_path = os.path.join(os.path.dirname(gpt_ckpt_path), 'spk_stat.pt')

|

| 106 |

+

assert os.path.exists(spk_stat_path), f'Missing spk_stat.pt: {spk_stat_path}'

|

| 107 |

+

self.pretrain_models['spk_stat'] = torch.load(spk_stat_path).to(device)

|

| 108 |

+

self.logger.log(logging.INFO, 'gpt loaded.')

|

| 109 |

+

|

| 110 |

+

if decoder_config_path:

|

| 111 |

+

cfg = OmegaConf.load(decoder_config_path)

|

| 112 |

+

decoder = DVAE(**cfg).to(device).eval()

|

| 113 |

+

assert decoder_ckpt_path, 'decoder_ckpt_path should not be None'

|

| 114 |

+

decoder.load_state_dict(torch.load(decoder_ckpt_path, map_location='cpu'))

|

| 115 |

+

self.pretrain_models['decoder'] = decoder

|

| 116 |

+

self.logger.log(logging.INFO, 'decoder loaded.')

|

| 117 |

+

|

| 118 |

+

if tokenizer_path:

|

| 119 |

+

tokenizer = torch.load(tokenizer_path, map_location='cpu')

|

| 120 |

+

tokenizer.padding_side = 'left'

|

| 121 |

+

self.pretrain_models['tokenizer'] = tokenizer

|

| 122 |

+

self.logger.log(logging.INFO, 'tokenizer loaded.')

|

| 123 |

+

|

| 124 |

+

self.check_model()

|

| 125 |

+

|

| 126 |

+

def infer(

|

| 127 |

+

self,

|

| 128 |

+

text,

|

| 129 |

+

skip_refine_text=False,

|

| 130 |

+

refine_text_only=False,

|

| 131 |

+

params_refine_text={},

|

| 132 |

+

params_infer_code={'prompt':'[speed_5]'},

|

| 133 |

+

use_decoder=True,

|

| 134 |

+

do_text_normalization=True,

|

| 135 |

+

lang=None,

|

| 136 |

+

):

|

| 137 |

+

|

| 138 |

+

assert self.check_model(use_decoder=use_decoder)

|

| 139 |

+

|

| 140 |

+

if not isinstance(text, list):

|

| 141 |

+

text = [text]

|

| 142 |

+

|

| 143 |

+

if do_text_normalization:

|

| 144 |

+

for i, t in enumerate(text):

|

| 145 |

+

_lang = detect_language(t) if lang is None else lang

|

| 146 |

+

self.init_normalizer(_lang)

|

| 147 |

+

text[i] = self.normalizer[_lang](t)

|

| 148 |

+

if _lang == 'zh':

|

| 149 |

+

text[i] = apply_half2full_map(text[i])

|

| 150 |

+

|

| 151 |

+

for i, t in enumerate(text):

|

| 152 |

+

invalid_characters = count_invalid_characters(t)

|

| 153 |

+

if len(invalid_characters):

|

| 154 |

+

self.logger.log(logging.WARNING, f'Invalid characters found! : {invalid_characters}')

|

| 155 |

+

text[i] = apply_character_map(t)

|

| 156 |

+

|

| 157 |

+

if not skip_refine_text:

|

| 158 |

+

text_tokens = refine_text(self.pretrain_models, text, **params_refine_text)['ids']

|

| 159 |

+

text_tokens = [i[i < self.pretrain_models['tokenizer'].convert_tokens_to_ids('[break_0]')] for i in text_tokens]

|

| 160 |

+

text = self.pretrain_models['tokenizer'].batch_decode(text_tokens)

|

| 161 |

+

if refine_text_only:

|

| 162 |

+

return text

|

| 163 |

+

|

| 164 |

+

text = [params_infer_code.get('prompt', '') + i for i in text]

|

| 165 |

+

params_infer_code.pop('prompt', '')

|

| 166 |

+

result = infer_code(self.pretrain_models, text, **params_infer_code, return_hidden=use_decoder)

|

| 167 |

+

|

| 168 |

+

if use_decoder:

|

| 169 |

+

mel_spec = [self.pretrain_models['decoder'](i[None].permute(0,2,1)) for i in result['hiddens']]

|

| 170 |

+

else:

|

| 171 |