Improve language tag

Hi! As the model is multilingual, this PR adds languages other than English to the `language` tag to improve the model's discoverability. Note that the README announces support for 29 languages, but only 13 are explicitly listed, so I was only able to add these 13.

README.md


@@ -1,677 +1,689 @@

---
license: mit
pipeline_tag: image-text-to-text
library_name: transformers
base_model:
- OpenGVLab/InternViT-300M-448px-V2_5
- Qwen/Qwen2.5-3B-Instruct
base_model_relation: merge
language:
- zho
- eng
- fra
- spa
- por
- deu
- ita
- rus
- jpn
- kor
- vie
- tha
- ara
tags:
- internvl
- custom_code
datasets:
- HuggingFaceFV/finevideo
---

# InternVL2_5-4B

[\[📂 GitHub\]](https://github.com/OpenGVLab/InternVL) [\[📜 InternVL 1.0\]](https://huggingface.co/papers/2312.14238) [\[📜 InternVL 1.5\]](https://huggingface.co/papers/2404.16821) [\[📜 Mini-InternVL\]](https://arxiv.org/abs/2410.16261) [\[📜 InternVL 2.5\]](https://huggingface.co/papers/2412.05271)

[\[🆕 Blog\]](https://internvl.github.io/blog/) [\[🗨️ Chat Demo\]](https://internvl.opengvlab.com/) [\[🤗 HF Demo\]](https://huggingface.co/spaces/OpenGVLab/InternVL) [\[🚀 Quick Start\]](#quick-start) [\[📖 Documents\]](https://internvl.readthedocs.io/en/latest/)

<div align="center">
<img width="500" alt="image" src="https://cdn-uploads.huggingface.co/production/uploads/64006c09330a45b03605bba3/zJsd2hqd3EevgXo6fNgC-.png">
</div>

## Introduction

We are excited to introduce **InternVL 2.5**, an advanced multimodal large language model (MLLM) series that builds upon InternVL 2.0, maintaining its core model architecture while introducing significant enhancements in training and testing strategies as well as data quality.

## InternVL 2.5 Family

In the following table, we provide an overview of the InternVL 2.5 series.

| Model Name | Vision Part | Language Part | HF Link |
| :-------------: | :-------------------------------------------------------------------------------------: | :----------------------------------------------------------------------------: | :---------------------------------------------------------: |
| InternVL2_5-1B | [InternViT-300M-448px-V2_5](https://huggingface.co/OpenGVLab/InternViT-300M-448px-V2_5) | [Qwen2.5-0.5B-Instruct](https://huggingface.co/Qwen/Qwen2.5-0.5B-Instruct) | [🤗 link](https://huggingface.co/OpenGVLab/InternVL2_5-1B) |
| InternVL2_5-2B | [InternViT-300M-448px-V2_5](https://huggingface.co/OpenGVLab/InternViT-300M-448px-V2_5) | [internlm2_5-1_8b-chat](https://huggingface.co/internlm/internlm2_5-1_8b-chat) | [🤗 link](https://huggingface.co/OpenGVLab/InternVL2_5-2B) |
| InternVL2_5-4B | [InternViT-300M-448px-V2_5](https://huggingface.co/OpenGVLab/InternViT-300M-448px-V2_5) | [Qwen2.5-3B-Instruct](https://huggingface.co/Qwen/Qwen2.5-3B-Instruct) | [🤗 link](https://huggingface.co/OpenGVLab/InternVL2_5-4B) |
| InternVL2_5-8B | [InternViT-300M-448px-V2_5](https://huggingface.co/OpenGVLab/InternViT-300M-448px-V2_5) | [internlm2_5-7b-chat](https://huggingface.co/internlm/internlm2_5-7b-chat) | [🤗 link](https://huggingface.co/OpenGVLab/InternVL2_5-8B) |
| InternVL2_5-26B | [InternViT-6B-448px-V2_5](https://huggingface.co/OpenGVLab/InternViT-6B-448px-V2_5) | [internlm2_5-20b-chat](https://huggingface.co/internlm/internlm2_5-20b-chat) | [🤗 link](https://huggingface.co/OpenGVLab/InternVL2_5-26B) |
| InternVL2_5-38B | [InternViT-6B-448px-V2_5](https://huggingface.co/OpenGVLab/InternViT-6B-448px-V2_5) | [Qwen2.5-32B-Instruct](https://huggingface.co/Qwen/Qwen2.5-32B-Instruct) | [🤗 link](https://huggingface.co/OpenGVLab/InternVL2_5-38B) |
| InternVL2_5-78B | [InternViT-6B-448px-V2_5](https://huggingface.co/OpenGVLab/InternViT-6B-448px-V2_5) | [Qwen2.5-72B-Instruct](https://huggingface.co/Qwen/Qwen2.5-72B-Instruct) | [🤗 link](https://huggingface.co/OpenGVLab/InternVL2_5-78B) |

## Model Architecture

As shown in the following figure, InternVL 2.5 retains the same model architecture as its predecessors, InternVL 1.5 and 2.0, following the "ViT-MLP-LLM" paradigm. In this new version, we integrate a newly incrementally pre-trained InternViT with various pre-trained LLMs, including InternLM 2.5 and Qwen 2.5, using a randomly initialized MLP projector.

As in the previous version, we apply a pixel unshuffle operation, reducing the number of visual tokens to one quarter of the original. In addition, we adopt a dynamic resolution strategy similar to that of InternVL 1.5, dividing images into tiles of 448×448 pixels. The key difference, introduced in InternVL 2.0, is additional support for multi-image and video data.
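
A minimal pure-Python sketch of the pixel unshuffle idea, assuming a scale factor of 2: each 2×2 block of neighbouring visual tokens is folded into a single token whose features are the four originals concatenated, so the token count drops to one quarter while the feature width grows fourfold. The `pixel_unshuffle` name, the list-of-lists token grid, and the channel ordering are illustrative assumptions rather than InternVL's tensor implementation; the 32×32 grid in the demo assumes a 448×448 tile split into 14×14-pixel ViT patches.

```python
from typing import List

Token = List[float]        # one visual token = a feature vector
Grid = List[List[Token]]   # h rows x w columns of tokens


def pixel_unshuffle(grid: Grid, factor: int = 2) -> Grid:
    """Fold each factor x factor block of tokens into a single token.

    The output has (h / factor) x (w / factor) tokens; each output token
    concatenates the features of the factor**2 tokens it replaces.
    """
    h, w = len(grid), len(grid[0])
    if h % factor or w % factor:
        raise ValueError("grid dimensions must be divisible by factor")
    out: Grid = []
    for r in range(0, h, factor):
        row: List[Token] = []
        for c in range(0, w, factor):
            merged: Token = []
            for dr in range(factor):
                for dc in range(factor):
                    merged.extend(grid[r + dr][c + dc])
            row.append(merged)
        out.append(row)
    return out


if __name__ == "__main__":
    # Assumed numbers: a 448x448 tile with 14x14 patches gives a 32x32 token grid.
    side = 448 // 14
    tokens = [[[0.0] * 8 for _ in range(side)] for _ in range(side)]
    merged = pixel_unshuffle(tokens)
    print(side * side, "->", len(merged) * len(merged[0]))  # 1024 -> 256
```

Because features are concatenated rather than averaged, no values are discarded in this sketch; only the sequence the LLM has to attend over gets shorter.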

## Training Strategy

### Dynamic High-Resolution for Multimodal Data

In InternVL 2.0 and 2.5, we extend the dynamic high-resolution training approach, enhancing its capabilities to handle multi-image and video datasets.
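
The tile-grid choice behind dynamic high resolution can be sketched in pure Python. Following the `dynamic_preprocess` and `find_closest_aspect_ratio` helpers in the Quick Start code, every grid of `cols × rows` 448×448 tiles with at most `max_num` tiles is a candidate, the grid whose aspect ratio is closest to the image's wins, and a tie goes to the larger grid only when the image has enough pixels to fill at least half of it. `candidate_grids` and `choose_grid` are hypothetical names, and the secondary sort key (breaking equal-area ties by grid shape) is an addition that makes the order deterministic.

```python
from typing import List, Tuple

Grid = Tuple[int, int]  # (columns, rows) of square tiles


def candidate_grids(min_num: int = 1, max_num: int = 12) -> List[Grid]:
    """All grids whose tile count lies in [min_num, max_num], smallest first."""
    grids = {(i, j)
             for i in range(1, max_num + 1)
             for j in range(1, max_num + 1)
             if min_num <= i * j <= max_num}
    # Sort by tile count; break ties by shape so the order is deterministic.
    return sorted(grids, key=lambda g: (g[0] * g[1], g))


def choose_grid(width: int, height: int, max_num: int = 12, image_size: int = 448) -> Grid:
    """Pick the tile grid whose aspect ratio best matches the image."""
    aspect = width / height
    best, best_diff = (1, 1), float("inf")
    for cols, rows in candidate_grids(1, max_num):
        diff = abs(aspect - cols / rows)
        if diff < best_diff:
            best, best_diff = (cols, rows), diff
        elif diff == best_diff and width * height > 0.5 * image_size * image_size * cols * rows:
            # Same ratio: prefer the bigger grid if the image is large enough for it.
            best = (cols, rows)
    return best


if __name__ == "__main__":
    cols, rows = choose_grid(1920, 1080)
    print(f"{cols}x{rows} grid -> {cols * rows} tiles")  # 4x2 grid -> 8 tiles
```

With `use_thumbnail=True`, as `load_image` sets it, a 448×448 thumbnail of the whole image is appended whenever more than one tile is produced, so a 1920×1080 image becomes 8 + 1 = 9 tiles at `max_num=12`.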

- For single-image datasets, the full tile budget `n_max` is allocated to the single image for maximum resolution. Visual tokens are enclosed in `<img>` and `</img>` tags.

- For multi-image datasets, the tile budget `n_max` is distributed across all images in a sample. Each image is labeled with an auxiliary tag such as `Image-1` and enclosed in `<img>` and `</img>` tags.

- For videos, each frame is resized to 448×448. Frames are labeled with tags such as `Frame-1` and, like images, enclosed in `<img>` and `</img>` tags.
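
A small sketch of how the tagged sequences described above might be assembled as strings: each image or frame gets its label, and its visual tokens are wrapped in `<img>` and `</img>`. The `<IMG_CONTEXT>` token string, the default of 256 tokens per tile (what a 32×32 patch grid would give after pixel unshuffle), and the helper names are assumptions for illustration, not the exact InternVL prompt code.

```python
from typing import List


def image_block(num_tiles: int, tokens_per_tile: int = 256, ctx: str = "<IMG_CONTEXT>") -> str:
    """Visual tokens for one image or frame, wrapped in <img> and </img>."""
    return "<img>" + ctx * (num_tiles * tokens_per_tile) + "</img>"


def multi_image_prefix(tiles_per_image: List[int], **kw) -> str:
    """Label each image 'Image-k:' and wrap its tiles' tokens in <img> tags."""
    return "".join(f"Image-{k}: {image_block(n, **kw)}\n"
                   for k, n in enumerate(tiles_per_image, start=1))


def video_prefix(num_frames: int, **kw) -> str:
    """Each video frame is a single 448x448 tile, labelled 'Frame-k:'."""
    return "".join(f"Frame-{k}: {image_block(1, **kw)}\n"
                   for k in range(1, num_frames + 1))


if __name__ == "__main__":
    # Tiny token counts so the output stays readable.
    print(multi_image_prefix([2, 1], tokens_per_tile=1))
    print(video_prefix(2, tokens_per_tile=1))
```

The multi-image example in the Quick Start writes its prompt in the same labelled style, `'Image-1: <image>\nImage-2: <image>\n...'`, with `<image>` standing in for each image's token block.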

### Single Model Training Pipeline

The training pipeline for a single model in InternVL 2.5 is structured across three stages, designed to enhance the model's visual perception and multimodal capabilities.

- **Stage 1: MLP Warmup.** In this stage, only the MLP projector is trained while the vision encoder and language model are frozen. Dynamic high-resolution training is applied here as well; it increases the training cost but improves performance. This phase ensures robust cross-modal alignment and prepares the model for stable multimodal training.

- **Stage 1.5: ViT Incremental Learning (Optional).** This stage allows incremental training of the vision encoder and MLP projector using the same data as Stage 1. It enhances the encoder’s ability to handle rare domains such as multilingual OCR and mathematical charts. Once trained, the encoder can be reused across LLMs without retraining, so this stage is optional unless new domains are introduced.

- **Stage 2: Full Model Instruction Tuning.** The entire model is trained on high-quality multimodal instruction datasets. Strict data quality controls are enforced to prevent degradation of the LLM, as noisy data can cause issues such as repetitive or incorrect outputs. After this stage, the training process is complete.
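
The three stages differ mainly in which components receive gradients. The sketch below records that as a table keyed by stage and filters parameter names by their top-level prefix. The prefixes `vision_model`, `mlp1`, and `language_model` are taken from the device map in the Multiple GPUs example; `TRAINABLE` and `trainable_params` are hypothetical helpers, not part of the InternVL codebase.

```python
from typing import Dict, FrozenSet, Iterable, List

# Top-level module prefixes as used in the Multiple GPUs device map.
VIT, MLP, LLM = "vision_model", "mlp1", "language_model"

TRAINABLE: Dict[str, FrozenSet[str]] = {
    "1": frozenset({MLP}),            # MLP warmup: ViT and LLM frozen
    "1.5": frozenset({VIT, MLP}),     # optional ViT incremental learning
    "2": frozenset({VIT, MLP, LLM}),  # full-model instruction tuning
}


def trainable_params(param_names: Iterable[str], stage: str) -> List[str]:
    """Return the parameter names that receive gradients in the given stage."""
    prefixes = TRAINABLE[stage]
    return [name for name in param_names if name.split(".", 1)[0] in prefixes]


if __name__ == "__main__":
    names = ["vision_model.embeddings.weight", "mlp1.0.weight",
             "language_model.model.layers.0.weight"]
    for stage in TRAINABLE:
        print(stage, trainable_params(names, stage))
```

With PyTorch, the same selection could be applied by looping over `model.named_parameters()` and setting each parameter's `requires_grad` according to whether its name is returned.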

### Progressive Scaling Strategy

We introduce a progressive scaling strategy to align the vision encoder with LLMs efficiently. This approach first trains with smaller LLMs (e.g., 20B) to optimize foundational visual capabilities and cross-modal alignment, then transfers the vision encoder to larger LLMs (e.g., 72B) without retraining it. Because the encoder is reused, the larger models can skip the intermediate training stages.

Compared with the 1.4 trillion tokens used by Qwen2-VL, InternVL2.5-78B is trained on only 120 billion tokens, less than one-tenth as many. This strategy minimizes redundancy, maximizes reuse of pre-trained components, and enables efficient training for complex vision-language tasks.

### Training Enhancements

To improve real-world adaptability and performance, we introduce two key techniques:

- **Random JPEG Compression**: Random JPEG compression with quality levels between 75 and 100 is applied as a data augmentation technique. This simulates the degradation typical of images collected from the internet, making the model more robust to noisy images.

- **Loss Reweighting**: To balance the next-token-prediction (NTP) loss across responses of different lengths, we use a reweighting strategy called **square averaging**. It balances the contributions of responses of varying lengths, mitigating bias toward either longer or shorter responses.
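
A sketch of length-dependent loss reweighting, assuming (as we read the technical report) that every token of a response with x tokens receives weight 1/x^p and the weighted token losses are normalized by the total weight: p = 0 is plain token averaging, p = 1 gives every response equal weight, and square averaging uses p = 0.5. `reweighted_loss` is a hypothetical name operating on plain lists of per-token losses rather than tensors.

```python
from typing import Sequence


def reweighted_loss(token_losses: Sequence[Sequence[float]], power: float = 0.5) -> float:
    """Combine per-token NTP losses from several responses into one scalar.

    Each token of a response with x tokens gets weight 1 / x**power, and the
    result is normalised by the total weight over the batch.
      power = 0   -> token averaging (long responses dominate)
      power = 1   -> sample averaging (each response counts equally)
      power = 0.5 -> square averaging (in between)
    """
    num = den = 0.0
    for losses in token_losses:
        if not losses:
            continue
        w = 1.0 / len(losses) ** power
        num += w * sum(losses)
        den += w * len(losses)
    if den == 0.0:
        raise ValueError("batch contains no tokens")
    return num / den


if __name__ == "__main__":
    batch = [[1.0], [0.0, 0.0, 0.0, 0.0]]  # a 1-token and a 4-token response
    for p in (0.0, 0.5, 1.0):
        print(p, round(reweighted_loss(batch, p), 3))
```

In this toy batch, token averaging lets the four-token response dominate (0.2), sample averaging weights the two responses equally (0.5), and square averaging lands in between (about 0.333).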

### Data Organization

#### Dataset Configuration

In InternVL 2.0 and 2.5, the organization of the training data is controlled by several key parameters that optimize the balance and distribution of datasets during training.

- **Data Augmentation:** JPEG compression is applied conditionally: it is enabled for image datasets to enhance robustness and disabled for video datasets to maintain consistent frame quality.

- **Maximum Tile Number:** The parameter `n_max` controls the maximum number of tiles per dataset. For example, higher values (24–36) are used for multi-image or high-resolution data, lower values (6–12) for standard images, and 1 for videos.

- **Repeat Factor:** The repeat factor `r` adjusts a dataset's sampling frequency. Values below 1 reduce the dataset's weight, while values above 1 increase it. This keeps training balanced across tasks and prevents overfitting or underfitting.
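
The three parameters can be pictured as a per-dataset config plus a sampler. The sketch below uses one simple, hypothetical reading of the repeat factor: `int(r)` full passes over the data plus a random `r - int(r)` fraction drawn without replacement, so r = 0.5 halves a dataset's share and r = 2 doubles it. `DatasetConfig`, `epoch_indices`, and the example values (picked from the ranges quoted above) are illustrative; InternVL's own data loader may implement this differently.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class DatasetConfig:
    """Hypothetical per-dataset settings mirroring the three parameters above."""
    name: str
    jpeg_compression: bool  # enabled for image data, disabled for video
    n_max: int              # maximum number of tiles per sample
    repeat: float           # repeat factor r


def epoch_indices(num_samples: int, repeat: float, rng: random.Random) -> List[int]:
    """Sample indices drawn from one dataset in one epoch under repeat factor r."""
    if repeat < 0:
        raise ValueError("repeat factor must be non-negative")
    full_passes = int(repeat)
    indices = list(range(num_samples)) * full_passes
    extra = round((repeat - full_passes) * num_samples)
    indices += rng.sample(range(num_samples), extra)  # partial pass, no duplicates
    rng.shuffle(indices)
    return indices


CONFIGS = [
    DatasetConfig("single_image_qa", jpeg_compression=True, n_max=12, repeat=1.0),
    DatasetConfig("multi_image_docs", jpeg_compression=True, n_max=24, repeat=2.0),
    DatasetConfig("video_captions", jpeg_compression=False, n_max=1, repeat=0.5),
]

if __name__ == "__main__":
    rng = random.Random(0)
    for cfg in CONFIGS:
        picked = epoch_indices(1000, cfg.repeat, rng)
        print(cfg.name, cfg.n_max, cfg.jpeg_compression, len(picked))
```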

#### Data Filtering Pipeline

During development, we found that LLMs are highly sensitive to data noise: even small anomalies, such as outliers or repetitive data, can cause abnormal behavior during inference. Repetitive generation, especially in long-form or chain-of-thought (CoT) reasoning tasks, proved particularly harmful.

To address this challenge and support future research, we designed an efficient data filtering pipeline to remove low-quality samples.

The pipeline includes two modules. For **pure-text data**, three key strategies are used:

1. **LLM-Based Quality Scoring**: Each sample is scored (0–10) using a pre-trained LLM with domain-specific prompts. Samples scoring below a threshold (e.g., 7) are removed to ensure high-quality data.
2. **Repetition Detection**: Repetitive samples are flagged using LLM-based prompts and manually reviewed. Samples scoring below a stricter threshold (e.g., 3) are excluded to avoid repetitive patterns.
3. **Heuristic Rule-Based Filtering**: Anomalies such as abnormal sentence lengths or duplicate lines are detected using rules. Flagged samples undergo manual verification before removal.
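
The pure-text strategies can be sketched as a two-step filter: a score threshold drops samples outright, and heuristic rules set suspicious samples aside for manual review instead of deleting them. `score` stands in for the LLM-based quality scorer, and the two rules (a line repeated more than three times, a "sentence" longer than 200 words) and their thresholds are hypothetical examples, not the rules InternVL actually uses.

```python
import re
from typing import Callable, Dict, List, Tuple


def has_duplicate_lines(text: str, max_repeats: int = 3) -> bool:
    """Flag text in which any non-empty line occurs more than max_repeats times."""
    counts: Dict[str, int] = {}
    for line in text.splitlines():
        line = line.strip()
        if line:
            counts[line] = counts.get(line, 0) + 1
    return any(c > max_repeats for c in counts.values())


def has_abnormal_sentence(text: str, max_words: int = 200) -> bool:
    """Flag text containing a sentence longer than max_words words."""
    sentences = re.split(r"[.!?]+\s*", text)
    return any(len(s.split()) > max_words for s in sentences)


def filter_pure_text(samples: List[str], score: Callable[[str], float],
                     threshold: float = 7.0) -> Tuple[List[str], List[str]]:
    """Return (kept, flagged_for_review)."""
    kept: List[str] = []
    flagged: List[str] = []
    for sample in samples:
        if score(sample) < threshold:
            continue  # low quality: removed outright
        if has_duplicate_lines(sample) or has_abnormal_sentence(sample):
            flagged.append(sample)  # suspicious: verify manually before removal
        else:
            kept.append(sample)
    return kept, flagged


if __name__ == "__main__":
    def toy_score(text: str) -> float:  # placeholder for an LLM judge
        return 8.0 if "good" in text else 5.0

    samples = ["A good answer.", "A bad answer.", "good\n" + "same line\n" * 5]
    kept, flagged = filter_pure_text(samples, toy_score)
    print(len(kept), "kept,", len(flagged), "flagged for review")  # 1 kept, 1 flagged for review
```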

For **multimodal data**, two strategies are used:

1. **Repetition Detection**: Repetitive samples in non-academic datasets are flagged and manually reviewed to prevent pattern loops. High-quality datasets are exempt from this process.
2. **Heuristic Rule-Based Filtering**: Similar rules are applied to detect visual anomalies, with flagged data verified manually to maintain integrity.

#### Training Data

As shown in the following figure, from InternVL 1.5 to 2.0 and then to 2.5, the fine-tuning data mixture has undergone iterative improvements in scale, quality, and diversity. For more information about the training data, please refer to our technical report.

|

| 148 |

+

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

## Evaluation on Multimodal Capability

|

| 152 |

+

|

| 153 |

+

### Multimodal Reasoning and Mathematics

|

| 154 |

+

|

| 155 |

+

|

| 156 |

+

|

| 157 |

+

|

| 158 |

+

|

| 159 |

+

### OCR, Chart, and Document Understanding

|

| 160 |

+

|

| 161 |

+

|

| 162 |

+

|

| 163 |

+

### Multi-Image & Real-World Comprehension

|

| 164 |

+

|

| 165 |

+

|

| 166 |

+

|

| 167 |

+

### Comprehensive Multimodal & Hallucination Evaluation

|

| 168 |

+

|

| 169 |

+

|

| 170 |

+

|

| 171 |

+

### Visual Grounding

|

| 172 |

+

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

### Multimodal Multilingual Understanding

|

| 176 |

+

|

| 177 |

+

|

| 178 |

+

|

| 179 |

+

### Video Understanding

|

| 180 |

+

|

| 181 |

+

|

| 182 |

+

|

| 183 |

+

## Evaluation on Language Capability

|

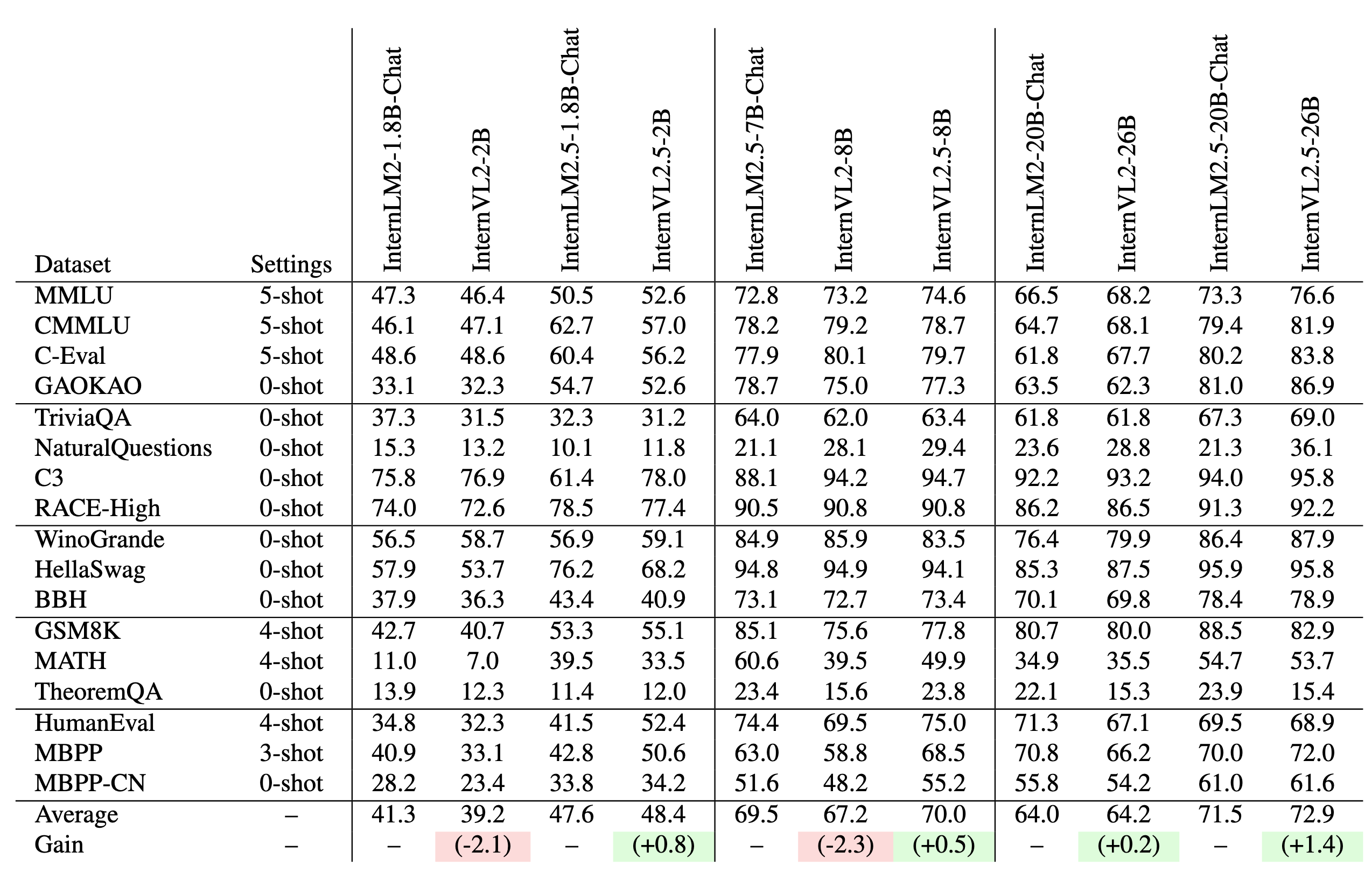

| 184 |

+

|

| 185 |

+

Training InternVL 2.0 models led to a decline in pure language capabilities. InternVL 2.5 addresses this by collecting more high-quality open-source data and filtering out low-quality data, achieving better preservation of pure language performance.

## Quick Start

We provide example code for running `InternVL2_5-4B` with `transformers`.

> Please use transformers>=4.37.2 to ensure the model works correctly.
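
One way to check this requirement before loading the model is sketched below. It reads the installed version with the standard-library `importlib.metadata.version` and compares only the leading numeric release components, so pre-release suffixes such as `.dev0` are ignored; the `packaging` library's `Version` class is the more thorough option. `release_tuple` and `transformers_ok` are hypothetical helper names.

```python
import re
from importlib.metadata import PackageNotFoundError, version
from typing import Tuple

MIN_TRANSFORMERS = "4.37.2"


def release_tuple(v: str) -> Tuple[int, ...]:
    """Leading numeric release components, e.g. '4.38.0.dev0' -> (4, 38, 0)."""
    match = re.match(r"\d+(?:\.\d+)*", v)
    if match is None:
        raise ValueError(f"unrecognised version string: {v!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def transformers_ok(installed: str, minimum: str = MIN_TRANSFORMERS) -> bool:
    """True if the installed release is at least the minimum (pre-release tags ignored)."""
    return release_tuple(installed) >= release_tuple(minimum)


if __name__ == "__main__":
    try:
        installed = version("transformers")
    except PackageNotFoundError:
        print("transformers is not installed")
    else:
        status = "OK" if transformers_ok(installed) else f"too old, need >= {MIN_TRANSFORMERS}"
        print(f"transformers {installed}: {status}")
```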

### Model Loading

#### 16-bit (bf16 / fp16)

```python
import torch
from transformers import AutoTokenizer, AutoModel
path = "OpenGVLab/InternVL2_5-4B"
model = AutoModel.from_pretrained(
    path,
    torch_dtype=torch.bfloat16,  # bf16 weights; use torch.float16 for fp16
    low_cpu_mem_usage=True,
    use_flash_attn=True,
    trust_remote_code=True).eval().cuda()  # InternVL ships custom modeling code
```

#### BNB 8-bit Quantization

```python
import torch
from transformers import AutoTokenizer, AutoModel
path = "OpenGVLab/InternVL2_5-4B"
model = AutoModel.from_pretrained(
    path,
    torch_dtype=torch.bfloat16,
    load_in_8bit=True,  # 8-bit weights via bitsandbytes (the package must be installed)
    low_cpu_mem_usage=True,
    use_flash_attn=True,
    trust_remote_code=True).eval()  # no .cuda(): the quantized weights are already placed on the GPU
```

#### Multiple GPUs

The code below is written this way to avoid errors that occur during multi-GPU inference when tensors end up on different devices. By keeping the first and last layers of the large language model (LLM) on the same device, we prevent such errors.

```python
import math
import torch
from transformers import AutoTokenizer, AutoModel

def split_model(model_name):
    device_map = {}
    world_size = torch.cuda.device_count()
    num_layers = {
        'InternVL2_5-1B': 24, 'InternVL2_5-2B': 24, 'InternVL2_5-4B': 36, 'InternVL2_5-8B': 32,
        'InternVL2_5-26B': 48, 'InternVL2_5-38B': 64, 'InternVL2_5-78B': 80}[model_name]
    # Since the first GPU will be used for ViT, treat it as half a GPU.
    num_layers_per_gpu = math.ceil(num_layers / (world_size - 0.5))
    num_layers_per_gpu = [num_layers_per_gpu] * world_size
    num_layers_per_gpu[0] = math.ceil(num_layers_per_gpu[0] * 0.5)
    layer_cnt = 0
    for i, num_layer in enumerate(num_layers_per_gpu):
        for j in range(num_layer):
            device_map[f'language_model.model.layers.{layer_cnt}'] = i
            layer_cnt += 1
    # Vision encoder, MLP projector, embeddings, final norm and output head stay on GPU 0.
    # Both naming schemes are listed: tok_embeddings/output (InternLM2 backbones)
    # and embed_tokens/lm_head (Qwen2.5 backbones).
    device_map['vision_model'] = 0
    device_map['mlp1'] = 0
    device_map['language_model.model.tok_embeddings'] = 0
    device_map['language_model.model.embed_tokens'] = 0
    device_map['language_model.output'] = 0
    device_map['language_model.model.norm'] = 0
    device_map['language_model.model.rotary_emb'] = 0
    device_map['language_model.lm_head'] = 0
    # Keep the last decoder layer on GPU 0 as well, next to the output head.
    device_map[f'language_model.model.layers.{num_layers - 1}'] = 0

    return device_map

path = "OpenGVLab/InternVL2_5-4B"
device_map = split_model('InternVL2_5-4B')
model = AutoModel.from_pretrained(
    path,
    torch_dtype=torch.bfloat16,
    low_cpu_mem_usage=True,
    use_flash_attn=True,
    trust_remote_code=True,
    device_map=device_map).eval()
```
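
To see how `split_model` distributes the decoder layers before downloading any weights, its arithmetic can be replayed without PyTorch. The hypothetical helper `layers_per_gpu` below mirrors the computation in `split_model`: GPU 0 counts as half a GPU because it also hosts the ViT, and the last layer is moved to GPU 0 next to the output head. Layer indices past the end, which `split_model` can also emit when the rounding overshoots, are dropped here.

```python
import math
from typing import Dict, List


def layers_per_gpu(num_layers: int, world_size: int) -> Dict[int, List[int]]:
    """Replay split_model's arithmetic: which decoder layers land on which GPU."""
    if world_size < 1:
        raise ValueError("need at least one GPU")
    # GPU 0 also hosts the ViT, so it is counted as half a GPU.
    per_gpu = math.ceil(num_layers / (world_size - 0.5))
    counts = [per_gpu] * world_size
    counts[0] = math.ceil(per_gpu * 0.5)
    placement: Dict[int, int] = {}
    layer = 0
    for gpu, n in enumerate(counts):
        for _ in range(n):
            placement[layer] = gpu
            layer += 1
    # The last layer is pinned to GPU 0, next to the embeddings and output head.
    placement[num_layers - 1] = 0
    result: Dict[int, List[int]] = {gpu: [] for gpu in range(world_size)}
    for idx, gpu in placement.items():
        if idx < num_layers:  # drop overshoot indices that name no real layer
            result[gpu].append(idx)
    return result


if __name__ == "__main__":
    for gpu, layers in layers_per_gpu(36, 2).items():
        print(f"GPU {gpu}: {len(layers)} layers")
```

For `InternVL2_5-4B` (36 layers) on two GPUs, this places layers 0 to 11 plus layer 35 on GPU 0 and layers 12 to 34 on GPU 1.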

### Inference with Transformers

```python
import numpy as np
import torch
import torchvision.transforms as T
from decord import VideoReader, cpu
from PIL import Image
from torchvision.transforms.functional import InterpolationMode
from transformers import AutoModel, AutoTokenizer

IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)

def build_transform(input_size):
    MEAN, STD = IMAGENET_MEAN, IMAGENET_STD
    transform = T.Compose([
        T.Lambda(lambda img: img.convert('RGB') if img.mode != 'RGB' else img),
        T.Resize((input_size, input_size), interpolation=InterpolationMode.BICUBIC),
        T.ToTensor(),
        T.Normalize(mean=MEAN, std=STD)
    ])
    return transform

def find_closest_aspect_ratio(aspect_ratio, target_ratios, width, height, image_size):
    best_ratio_diff = float('inf')
    best_ratio = (1, 1)
    area = width * height
    for ratio in target_ratios:
        target_aspect_ratio = ratio[0] / ratio[1]
        ratio_diff = abs(aspect_ratio - target_aspect_ratio)
        if ratio_diff < best_ratio_diff:
            best_ratio_diff = ratio_diff
            best_ratio = ratio
        elif ratio_diff == best_ratio_diff:
            # on a tie, prefer the larger grid if the image is big enough to fill it
            if area > 0.5 * image_size * image_size * ratio[0] * ratio[1]:
                best_ratio = ratio
    return best_ratio

def dynamic_preprocess(image, min_num=1, max_num=12, image_size=448, use_thumbnail=False):
    orig_width, orig_height = image.size
    aspect_ratio = orig_width / orig_height

    # enumerate candidate tile grids (columns, rows) with min_num <= columns * rows <= max_num
    target_ratios = set(
        (i, j) for n in range(min_num, max_num + 1) for i in range(1, n + 1) for j in range(1, n + 1) if
        i * j <= max_num and i * j >= min_num)
    target_ratios = sorted(target_ratios, key=lambda x: x[0] * x[1])

    # find the closest aspect ratio to the target
    target_aspect_ratio = find_closest_aspect_ratio(
        aspect_ratio, target_ratios, orig_width, orig_height, image_size)

    # calculate the target width and height
    target_width = image_size * target_aspect_ratio[0]
    target_height = image_size * target_aspect_ratio[1]
    blocks = target_aspect_ratio[0] * target_aspect_ratio[1]

    # resize the image
    resized_img = image.resize((target_width, target_height))
    processed_images = []
    for i in range(blocks):
        box = (
            (i % (target_width // image_size)) * image_size,
            (i // (target_width // image_size)) * image_size,
            ((i % (target_width // image_size)) + 1) * image_size,
            ((i // (target_width // image_size)) + 1) * image_size
        )
        # split the image
        split_img = resized_img.crop(box)
        processed_images.append(split_img)
    assert len(processed_images) == blocks
    if use_thumbnail and len(processed_images) != 1:
        thumbnail_img = image.resize((image_size, image_size))
        processed_images.append(thumbnail_img)
    return processed_images

def load_image(image_file, input_size=448, max_num=12):
    image = Image.open(image_file).convert('RGB')
    transform = build_transform(input_size=input_size)
    images = dynamic_preprocess(image, image_size=input_size, use_thumbnail=True, max_num=max_num)
    pixel_values = [transform(image) for image in images]
    pixel_values = torch.stack(pixel_values)
    return pixel_values

# If you want to load a model using multiple GPUs, please refer to the `Multiple GPUs` section.
path = 'OpenGVLab/InternVL2_5-4B'
model = AutoModel.from_pretrained(
    path,
    torch_dtype=torch.bfloat16,
    low_cpu_mem_usage=True,
    use_flash_attn=True,
    trust_remote_code=True).eval().cuda()
tokenizer = AutoTokenizer.from_pretrained(path, trust_remote_code=True, use_fast=False)

# set the max number of tiles in `max_num`
pixel_values = load_image('./examples/image1.jpg', max_num=12).to(torch.bfloat16).cuda()
generation_config = dict(max_new_tokens=1024, do_sample=True)

# pure-text conversation
question = 'Hello, who are you?'
response, history = model.chat(tokenizer, None, question, generation_config, history=None, return_history=True)
print(f'User: {question}\nAssistant: {response}')

question = 'Can you tell me a story?'
response, history = model.chat(tokenizer, None, question, generation_config, history=history, return_history=True)
print(f'User: {question}\nAssistant: {response}')

# single-image single-round conversation
question = '<image>\nPlease describe the image briefly.'
response = model.chat(tokenizer, pixel_values, question, generation_config)
print(f'User: {question}\nAssistant: {response}')

# single-image multi-round conversation
question = '<image>\nPlease describe the image in detail.'
response, history = model.chat(tokenizer, pixel_values, question, generation_config, history=None, return_history=True)
print(f'User: {question}\nAssistant: {response}')

question = 'Please write a poem according to the image.'
response, history = model.chat(tokenizer, pixel_values, question, generation_config, history=history, return_history=True)
print(f'User: {question}\nAssistant: {response}')

# multi-image multi-round conversation, combined images
# (the tiles of both images are concatenated behind a single <image> placeholder)
pixel_values1 = load_image('./examples/image1.jpg', max_num=12).to(torch.bfloat16).cuda()
pixel_values2 = load_image('./examples/image2.jpg', max_num=12).to(torch.bfloat16).cuda()
pixel_values = torch.cat((pixel_values1, pixel_values2), dim=0)

question = '<image>\nDescribe the two images in detail.'
response, history = model.chat(tokenizer, pixel_values, question, generation_config,
                               history=None, return_history=True)
print(f'User: {question}\nAssistant: {response}')

question = 'What are the similarities and differences between these two images?'
response, history = model.chat(tokenizer, pixel_values, question, generation_config,
                               history=history, return_history=True)
print(f'User: {question}\nAssistant: {response}')

# multi-image multi-round conversation, separate images
# (num_patches_list tells the model how many tiles belong to each <image> placeholder)
pixel_values1 = load_image('./examples/image1.jpg', max_num=12).to(torch.bfloat16).cuda()
pixel_values2 = load_image('./examples/image2.jpg', max_num=12).to(torch.bfloat16).cuda()
pixel_values = torch.cat((pixel_values1, pixel_values2), dim=0)
num_patches_list = [pixel_values1.size(0), pixel_values2.size(0)]

question = 'Image-1: <image>\nImage-2: <image>\nDescribe the two images in detail.'
response, history = model.chat(tokenizer, pixel_values, question, generation_config,
                               num_patches_list=num_patches_list,
                               history=None, return_history=True)
print(f'User: {question}\nAssistant: {response}')

question = 'What are the similarities and differences between these two images?'
response, history = model.chat(tokenizer, pixel_values, question, generation_config,
                               num_patches_list=num_patches_list,
                               history=history, return_history=True)
print(f'User: {question}\nAssistant: {response}')

# batch inference, single image per sample
pixel_values1 = load_image('./examples/image1.jpg', max_num=12).to(torch.bfloat16).cuda()
pixel_values2 = load_image('./examples/image2.jpg', max_num=12).to(torch.bfloat16).cuda()
num_patches_list = [pixel_values1.size(0), pixel_values2.size(0)]
pixel_values = torch.cat((pixel_values1, pixel_values2), dim=0)

questions = ['<image>\nDescribe the image in detail.'] * len(num_patches_list)
responses = model.batch_chat(tokenizer, pixel_values,
                             num_patches_list=num_patches_list,
                             questions=questions,
                             generation_config=generation_config)
for question, response in zip(questions, responses):
    print(f'User: {question}\nAssistant: {response}')

|

| 441 |

+

|

| 442 |

+

# video multi-round conversation (视频多轮对话)

|

| 443 |

+

def get_index(bound, fps, max_frame, first_idx=0, num_segments=32):

|

| 444 |

+

if bound:

|

| 445 |

+

start, end = bound[0], bound[1]

|

| 446 |

+

else:

|

| 447 |

+

start, end = -100000, 100000

|

| 448 |

+

start_idx = max(first_idx, round(start * fps))

|

| 449 |

+

end_idx = min(round(end * fps), max_frame)

|

| 450 |

+

seg_size = float(end_idx - start_idx) / num_segments

|

| 451 |

+

frame_indices = np.array([

|

| 452 |

+

int(start_idx + (seg_size / 2) + np.round(seg_size * idx))

|

| 453 |

+

for idx in range(num_segments)

|

| 454 |

+

])

|

| 455 |

+

return frame_indices

|

| 456 |

+

|

| 457 |

+

def load_video(video_path, bound=None, input_size=448, max_num=1, num_segments=32):

|

| 458 |

+

vr = VideoReader(video_path, ctx=cpu(0), num_threads=1)

|

| 459 |

+

max_frame = len(vr) - 1

|

| 460 |

+

fps = float(vr.get_avg_fps())

|

| 461 |

+

|

| 462 |

+

pixel_values_list, num_patches_list = [], []

|

| 463 |

+

transform = build_transform(input_size=input_size)

|

| 464 |

+

frame_indices = get_index(bound, fps, max_frame, first_idx=0, num_segments=num_segments)

|

| 465 |

+

for frame_index in frame_indices:

|

| 466 |

+

img = Image.fromarray(vr[frame_index].asnumpy()).convert('RGB')

|

| 467 |

+

img = dynamic_preprocess(img, image_size=input_size, use_thumbnail=True, max_num=max_num)

|

| 468 |

+

pixel_values = [transform(tile) for tile in img]

|

| 469 |

+

pixel_values = torch.stack(pixel_values)

|

| 470 |

+

num_patches_list.append(pixel_values.shape[0])

|

| 471 |

+

pixel_values_list.append(pixel_values)

|

| 472 |

+

pixel_values = torch.cat(pixel_values_list)

|

| 473 |

+

return pixel_values, num_patches_list

|

| 474 |

+

|

| 475 |

+

video_path = './examples/red-panda.mp4'

|

| 476 |

+

pixel_values, num_patches_list = load_video(video_path, num_segments=8, max_num=1)

|

| 477 |

+

pixel_values = pixel_values.to(torch.bfloat16).cuda()

|

| 478 |

+

video_prefix = ''.join([f'Frame{i+1}: <image>\n' for i in range(len(num_patches_list))])

|

| 479 |

+

question = video_prefix + 'What is the red panda doing?'

|

| 480 |

+

# Frame1: <image>\nFrame2: <image>\n...\nFrame8: <image>\n{question}

|

| 481 |

+

response, history = model.chat(tokenizer, pixel_values, question, generation_config,

|

| 482 |

+

num_patches_list=num_patches_list, history=None, return_history=True)

|

| 483 |

+

print(f'User: {question}\nAssistant: {response}')

|

| 484 |

+

|

| 485 |

+

question = 'Describe this video in detail.'

|

| 486 |

+

response, history = model.chat(tokenizer, pixel_values, question, generation_config,

|

| 487 |

+

num_patches_list=num_patches_list, history=history, return_history=True)

|

| 488 |

+

print(f'User: {question}\nAssistant: {response}')

|

| 489 |

+

```

|

| 490 |

+

|

| 491 |

+
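The `get_index` helper in the video example picks one frame from the middle of each of `num_segments` equal-length segments of the clip (or of the optional `bound` window, given in seconds). The standalone sketch below mirrors that arithmetic using only the Python standard library, so you can check which frames would be fed to the model without decoding a video. The function name `sample_frame_indices` and the 300-frame, 30 fps example clip are hypothetical.

```python
def sample_frame_indices(num_frames, fps, num_segments=8, bound=None):
    """Mirror of get_index(): one frame index near the middle of each equal segment.

    num_frames and fps describe a hypothetical clip; bound is an optional
    (start_seconds, end_seconds) window, as in the original helper.
    """
    max_frame = num_frames - 1
    # Without a bound, use a very wide window that is then clipped to the clip's frames
    start, end = bound if bound else (-100000, 100000)
    start_idx = max(0, round(start * fps))
    end_idx = min(round(end * fps), max_frame)
    seg_size = (end_idx - start_idx) / num_segments
    # Offsetting by half a segment places each pick near the segment's centre.
    # Python's round() rounds halves to even, matching np.round in get_index().
    return [int(start_idx + seg_size / 2 + round(seg_size * i)) for i in range(num_segments)]


# A hypothetical 10-second clip: 300 frames at 30 fps, sampled into 8 segments
print(sample_frame_indices(300, 30.0, num_segments=8))
# → [18, 55, 93, 130, 168, 205, 242, 280]

# Restricting sampling to the window between 2 s and 4 s
print(sample_frame_indices(300, 30.0, num_segments=8, bound=(2, 4)))
# → [63, 71, 78, 85, 93, 101, 108, 115]
```

Because the video example loads each frame with `max_num=1`, every sampled frame becomes exactly one tile, so `num_patches_list` there is a list of `num_segments` ones.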

#### Streaming Output

Besides the blocking `model.chat` calls shown above, you can stream the output as it is generated with the following code.

```python
from transformers import TextIteratorStreamer
from threading import Thread

# Initialize the streamer
streamer = TextIteratorStreamer(tokenizer, skip_prompt=True, skip_special_tokens=True, timeout=10)
# Define the generation configuration
generation_config = dict(max_new_tokens=1024, do_sample=False, streamer=streamer)
# Start the model chat in a separate thread
thread = Thread(target=model.chat, kwargs=dict(
    tokenizer=tokenizer, pixel_values=pixel_values, question=question,
    history=None, return_history=False, generation_config=generation_config,
))
thread.start()

# Initialize an empty string to store the generated text
generated_text = ''
# Loop through the streamer to get the new text as it is generated
for new_text in streamer:
    if new_text == model.conv_template.sep:
        break
    generated_text += new_text
    print(new_text, end='', flush=True)  # Print each new chunk of generated text on the same line
```
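The streaming code is a producer/consumer arrangement: `model.chat` runs in a background thread and pushes decoded text into the streamer, while the main thread iterates over it and stops at the conversation separator. The model-free sketch below reproduces that control flow with the standard library only. `ToyStreamer`, `fake_generate`, and the `<|im_end|>` separator value are hypothetical stand-ins, not the `transformers` implementation.

```python
import queue
import threading

SEP = '<|im_end|>'  # hypothetical stand-in for model.conv_template.sep


class ToyStreamer:
    """Iterator over text chunks pushed by another thread (a stand-in for TextIteratorStreamer)."""

    _STOP = object()  # sentinel marking the end of generation

    def __init__(self, timeout=10.0):
        self._queue = queue.Queue()
        self._timeout = timeout

    def put(self, text):
        self._queue.put(text)

    def end(self):
        self._queue.put(self._STOP)

    def __iter__(self):
        while True:
            item = self._queue.get(timeout=self._timeout)  # raises queue.Empty on timeout
            if item is self._STOP:
                return
            yield item


def fake_generate(streamer, chunks):
    """Hypothetical producer: emits chunks one by one, then signals the end."""
    for chunk in chunks:
        streamer.put(chunk)
    streamer.end()


streamer = ToyStreamer()
thread = threading.Thread(target=fake_generate, kwargs=dict(
    streamer=streamer, chunks=['The red ', 'panda is ', 'eating.', SEP, 'ignored']))
thread.start()

generated_text = ''
for new_text in streamer:
    if new_text == SEP:  # stop at the separator, as the real loop does
        break
    generated_text += new_text
    print(new_text, end='', flush=True)
thread.join()
```

Joining the thread at the end is a small addition over the original loop; it simply waits for the producer to finish.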

## Finetune

Many repositories now support fine-tuning of the InternVL series models, including [InternVL](https://github.com/OpenGVLab/InternVL), [SWIFT](https://github.com/modelscope/ms-swift), [XTuner](https://github.com/InternLM/xtuner), and others. Please refer to their documentation for details on fine-tuning.

## Deployment

### LMDeploy

LMDeploy is a toolkit for compressing, deploying, and serving LLMs & VLMs.

```sh
pip install "lmdeploy>=0.6.4"
```

LMDeploy abstracts the complex inference process of Vision-Language Models (VLMs) into an easy-to-use pipeline, similar to its Large Language Model (LLM) inference pipeline.

#### A 'Hello, world' Example

```python
from lmdeploy import pipeline, TurbomindEngineConfig
from lmdeploy.vl import load_image

model = 'OpenGVLab/InternVL2_5-4B'
image = load_image('https://raw.githubusercontent.com/open-mmlab/mmdeploy/main/tests/data/tiger.jpeg')
pipe = pipeline(model, backend_config=TurbomindEngineConfig(session_len=8192))
response = pipe(('describe this image', image))
print(response.text)
```

If an `ImportError` occurs when running this example, install the missing dependencies as prompted.

#### Multi-image Inference

When working with multiple images, you can put them all in one list. Keep in mind that more images mean more input tokens, so the context window (`session_len`) typically needs to be increased.

```python
from lmdeploy import pipeline, TurbomindEngineConfig
from lmdeploy.vl import load_image
from lmdeploy.vl.constants import IMAGE_TOKEN

model = 'OpenGVLab/InternVL2_5-4B'
pipe = pipeline(model, backend_config=TurbomindEngineConfig(session_len=8192))

image_urls = [
    'https://raw.githubusercontent.com/open-mmlab/mmdeploy/main/demo/resources/human-pose.jpg',
    'https://raw.githubusercontent.com/open-mmlab/mmdeploy/main/demo/resources/det.jpg'
]

images = [load_image(img_url) for img_url in image_urls]
# Numbering images improves multi-image conversations
response = pipe((f'Image-1: {IMAGE_TOKEN}\nImage-2: {IMAGE_TOKEN}\ndescribe these two images', images))
print(response.text)
```
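To see why several images can exhaust the context window, the sketch below does the arithmetic under stated assumptions: each 448×448 tile becomes 256 visual tokens (InternVL2.5's setting), an image tiled with `max_num=12` yields at most 12 tiles plus one thumbnail tile, and the ~100-token text prompt is a made-up figure. Actual tile counts depend on each image's aspect ratio and on the preprocessing LMDeploy applies, so treat the result as a rough upper bound rather than an exact count.

```python
TOKENS_PER_TILE = 256         # assumption: one 448x448 tile -> 256 visual tokens
MAX_TILES_PER_IMAGE = 12 + 1  # assumption: max_num=12 tiles plus one thumbnail tile


def estimate_prompt_tokens(tiles_per_image, text_tokens):
    """Rough number of input tokens for a prompt with the given images and text."""
    return sum(tiles_per_image) * TOKENS_PER_TILE + text_tokens


def fits_in_session(tiles_per_image, text_tokens, max_new_tokens, session_len):
    """True if the prompt plus the generation budget fits within session_len tokens."""
    return estimate_prompt_tokens(tiles_per_image, text_tokens) + max_new_tokens <= session_len


# Worst case for two images, ~100 text tokens (hypothetical), 1024 new tokens
two_images = [MAX_TILES_PER_IMAGE] * 2
print(estimate_prompt_tokens(two_images, 100))                      # 6756
print(fits_in_session(two_images, 100, 1024, 8192))                 # True
print(fits_in_session([MAX_TILES_PER_IMAGE] * 3, 100, 1024, 8192))  # False
```

Under these assumptions, a third worst-case image would push the request past `session_len=8192`, which is why the session length may need to be raised for multi-image prompts.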

#### Batch Prompts Inference

To run inference on a batch of prompts, simply place them in a list:

```python
from lmdeploy import pipeline, TurbomindEngineConfig
from lmdeploy.vl import load_image

model = 'OpenGVLab/InternVL2_5-4B'
pipe = pipeline(model, backend_config=TurbomindEngineConfig(session_len=8192))

image_urls = [
    "https://raw.githubusercontent.com/open-mmlab/mmdeploy/main/demo/resources/human-pose.jpg",
    "https://raw.githubusercontent.com/open-mmlab/mmdeploy/main/demo/resources/det.jpg"
]
prompts = [('describe this image', load_image(img_url)) for img_url in image_urls]
response = pipe(prompts)
print(response)
```

#### Multi-turn Conversation

There are two ways to hold a multi-turn conversation with the pipeline: construct the messages in the OpenAI format and call the pipeline as in the examples above, or use the `pipeline.chat` interface.

```python
from lmdeploy import pipeline, TurbomindEngineConfig, GenerationConfig
from lmdeploy.vl import load_image

model = 'OpenGVLab/InternVL2_5-4B'
pipe = pipeline(model, backend_config=TurbomindEngineConfig(session_len=8192))

image = load_image('https://raw.githubusercontent.com/open-mmlab/mmdeploy/main/demo/resources/human-pose.jpg')
gen_config = GenerationConfig(top_k=40, top_p=0.8, temperature=0.8)
sess = pipe.chat(('describe this image', image), gen_config=gen_config)
print(sess.response.text)
sess = pipe.chat('What is the woman doing?', session=sess, gen_config=gen_config)
print(sess.response.text)
```
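For the OpenAI-format approach, the conversation is kept as a list of message dicts that grows by one assistant turn and one user turn per round. The helpers below (`user_turn` and `assistant_turn`, both hypothetical names) only build that list in plain Python. The commented-out `pipe(messages, gen_config=gen_config)` call is an assumption about how you would hand the list to the pipeline, so check the LMDeploy documentation for your version.

```python
def user_turn(text, image_url=None):
    """A user message in the OpenAI chat format, optionally with one image URL."""
    content = [{'type': 'text', 'text': text}]
    if image_url is not None:
        content.append({'type': 'image_url', 'image_url': {'url': image_url}})
    return {'role': 'user', 'content': content}


def assistant_turn(text):
    """An assistant message carrying the model's previous reply."""
    return {'role': 'assistant', 'content': text}


image_url = 'https://raw.githubusercontent.com/open-mmlab/mmdeploy/main/demo/resources/human-pose.jpg'
messages = [user_turn('describe this image', image_url)]

# Assumption: out = pipe(messages, gen_config=gen_config); reply = out.text
reply = '<first reply from the model>'  # hypothetical placeholder
messages.append(assistant_turn(reply))
messages.append(user_turn('What is the woman doing?'))

print([m['role'] for m in messages])  # ['user', 'assistant', 'user']
```

Only the first user turn carries the image here; later turns refer back to it through the conversation history.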

#### Service

LMDeploy's `api_server` enables models to be easily packed into services with a single command. The provided RESTful APIs are compatible with OpenAI's interfaces. Below is an example of starting the service:

```shell
lmdeploy serve api_server OpenGVLab/InternVL2_5-4B --server-port 23333
```

To use the OpenAI-style interface, install the OpenAI Python package:

```shell
pip install openai
```

Then, use the code below to make the API call:

```python
from openai import OpenAI

client = OpenAI(api_key='YOUR_API_KEY', base_url='http://0.0.0.0:23333/v1')
model_name = client.models.list().data[0].id
response = client.chat.completions.create(
    model=model_name,
    messages=[{
        'role':
        'user',
        'content': [{
            'type': 'text',
            'text': 'describe this image',
        }, {
            'type': 'image_url',
            'image_url': {
                'url':
                'https://modelscope.oss-cn-beijing.aliyuncs.com/resource/tiger.jpeg',
            },
        }],
    }],
    temperature=0.8,
    top_p=0.8)
print(response)
```
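If you would rather not install the `openai` package, the same request can be sent with Python's standard library, since the server exposes an OpenAI-compatible `/v1/chat/completions` endpoint. The sketch below only builds the request; the model id `OpenGVLab/InternVL2_5-4B` is an assumption (query `GET /v1/models`, as `client.models.list()` does above, to get the served name), and the network call is left commented out.

```python
import json
import urllib.request


def build_chat_request(base_url, model, text, image_url, temperature=0.8, top_p=0.8,
                       api_key='YOUR_API_KEY'):
    """Build a POST request for an OpenAI-compatible /chat/completions endpoint."""
    payload = {
        'model': model,
        'messages': [{
            'role': 'user',
            'content': [
                {'type': 'text', 'text': text},
                {'type': 'image_url', 'image_url': {'url': image_url}},
            ],
        }],
        'temperature': temperature,
        'top_p': top_p,
    }
    return urllib.request.Request(
        base_url.rstrip('/') + '/chat/completions',
        data=json.dumps(payload).encode('utf-8'),
        headers={'Content-Type': 'application/json', 'Authorization': f'Bearer {api_key}'},
        method='POST',
    )


req = build_chat_request(
    'http://0.0.0.0:23333/v1',
    'OpenGVLab/InternVL2_5-4B',  # assumption: check GET /v1/models for the served name
    'describe this image',
    'https://modelscope.oss-cn-beijing.aliyuncs.com/resource/tiger.jpeg',
)
print(req.get_method(), req.full_url)  # POST http://0.0.0.0:23333/v1/chat/completions

# With the server running, send the request and print the reply text:
# with urllib.request.urlopen(req) as resp:
#     print(json.load(resp)['choices'][0]['message']['content'])
```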

## License

This project is released under the MIT License. This project uses the pre-trained Qwen2.5-3B-Instruct as a component, which is licensed under the Apache License 2.0.

## Citation

If you find this project useful in your research, please consider citing:

```BibTeX
@article{chen2024expanding,
  title={Expanding Performance Boundaries of Open-Source Multimodal Models with Model, Data, and Test-Time Scaling},
  author={Chen, Zhe and Wang, Weiyun and Cao, Yue and Liu, Yangzhou and Gao, Zhangwei and Cui, Erfei and Zhu, Jinguo and Ye, Shenglong and Tian, Hao and Liu, Zhaoyang and others},
  journal={arXiv preprint arXiv:2412.05271},
  year={2024}
}
@article{gao2024mini,
  title={Mini-internvl: A flexible-transfer pocket multimodal model with 5\% parameters and 90\% performance},
  author={Gao, Zhangwei and Chen, Zhe and Cui, Erfei and Ren, Yiming and Wang, Weiyun and Zhu, Jinguo and Tian, Hao and Ye, Shenglong and He, Junjun and Zhu, Xizhou and others},
  journal={arXiv preprint arXiv:2410.16261},
  year={2024}
}
@article{chen2024far,
  title={How Far Are We to GPT-4V? Closing the Gap to Commercial Multimodal Models with Open-Source Suites},
  author={Chen, Zhe and Wang, Weiyun and Tian, Hao and Ye, Shenglong and Gao, Zhangwei and Cui, Erfei and Tong, Wenwen and Hu, Kongzhi and Luo, Jiapeng and Ma, Zheng and others},
  journal={arXiv preprint arXiv:2404.16821},
  year={2024}
}
@inproceedings{chen2024internvl,
  title={Internvl: Scaling up vision foundation models and aligning for generic visual-linguistic tasks},
  author={Chen, Zhe and Wu, Jiannan and Wang, Wenhai and Su, Weijie and Chen, Guo and Xing, Sen and Zhong, Muyan and Zhang, Qinglong and Zhu, Xizhou and Lu, Lewei and others},
  booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},
  pages={24185--24198},
  year={2024}
}
```